MPEG-H Audio vs. “Dolby Atmos” – there is a winner!


In the audio world, there are a few terms that keep popping up and are constantly talked and written about, and yet the common understanding is often: this exists, people roughly know what it is, but hardly anyone can give the exact details.

Two terms that often fall into this category are “Dolby Atmos” (Dolby) and “MPEG-H Audio” (Fraunhofer IIS). To put it bluntly, these are the two Next Generation Audio (NGA) competitors! To me, the format war has more of “good versus evil” than “yin and yang.”

Fraunhofer IIS revolutionized how we consume audio content with the world-renowned mp3 format. Dolby is probably the first name that comes to listeners’ minds when it comes to cinema sound. Which of the two represents the dark side of the Force and which the light side, I leave for readers to decide.

Even though I always enjoy comparing these two big players, all the discussions don’t change much. Hence my appeal not to get caught up in technical debates: at the end of the day, both parties have the same goal, namely to deliver the best possible audio experience to people. And in my opinion, the content is much more important than the technology.

The battle of the audio giants

“Dolby Atmos” is probably a bit more widely known due to its presence in cinemas since the early 2010s. MPEG-H Audio is becoming more and more relevant and has recently come into focus due to its successful standardization in Brazil.

The competition in said standardization was clearly won by Fraunhofer IIS.

While they were able to fulfill all 24 criteria with their MPEG-H Audio codec, AC-4 only managed 2!

This article looks at the reasons for this result and at what exactly both terms mean. So there is already a winner – the public just doesn’t know about it yet.

An important disclaimer up front: MPEG-H Audio is an audio codec, while the term “Dolby Atmos” is an umbrella term for Dolby’s immersive sound experience. This content can be transmitted via various codecs (e.g. Dolby Digital Plus (E-AC-3) or AC-4).

Since the term “Dolby Atmos” refers, depending on the application area, to content carried in E-AC-3 (Dolby Digital Plus) or AC-4, it will still be used below for the sake of simplicity, but in quotation marks. Please keep in mind that different codecs may be behind it.

Next Generation Audio

3D audio or immersive audio has been an integral part of the audio industry for several years. It brings new complexity in the form of codecs and formats, various technologies and software, but also new creative territory with different design possibilities and extended playback systems.

MPEG-H Audio and “Dolby Atmos” are so-called “Next Generation Audio” (NGA) systems. The basic concept of NGA is explained in a separate article.

This article will go into more depth and make a direct comparison of MPEG-H Audio vs. “Dolby Atmos” easier.

How is object-based audio defined?

Next Generation Audio (NGA), as defined in the DVB specification ETSI TS 101 154, enables a variety of new concepts and techniques for delivering immersive audio content and provides greater flexibility in producing and distributing that content.

Audio content can be produced, transmitted and played back using channel-based (e.g. stereo, 5.1 surround), scene-based (e.g. Ambisonics) or object-based approaches.

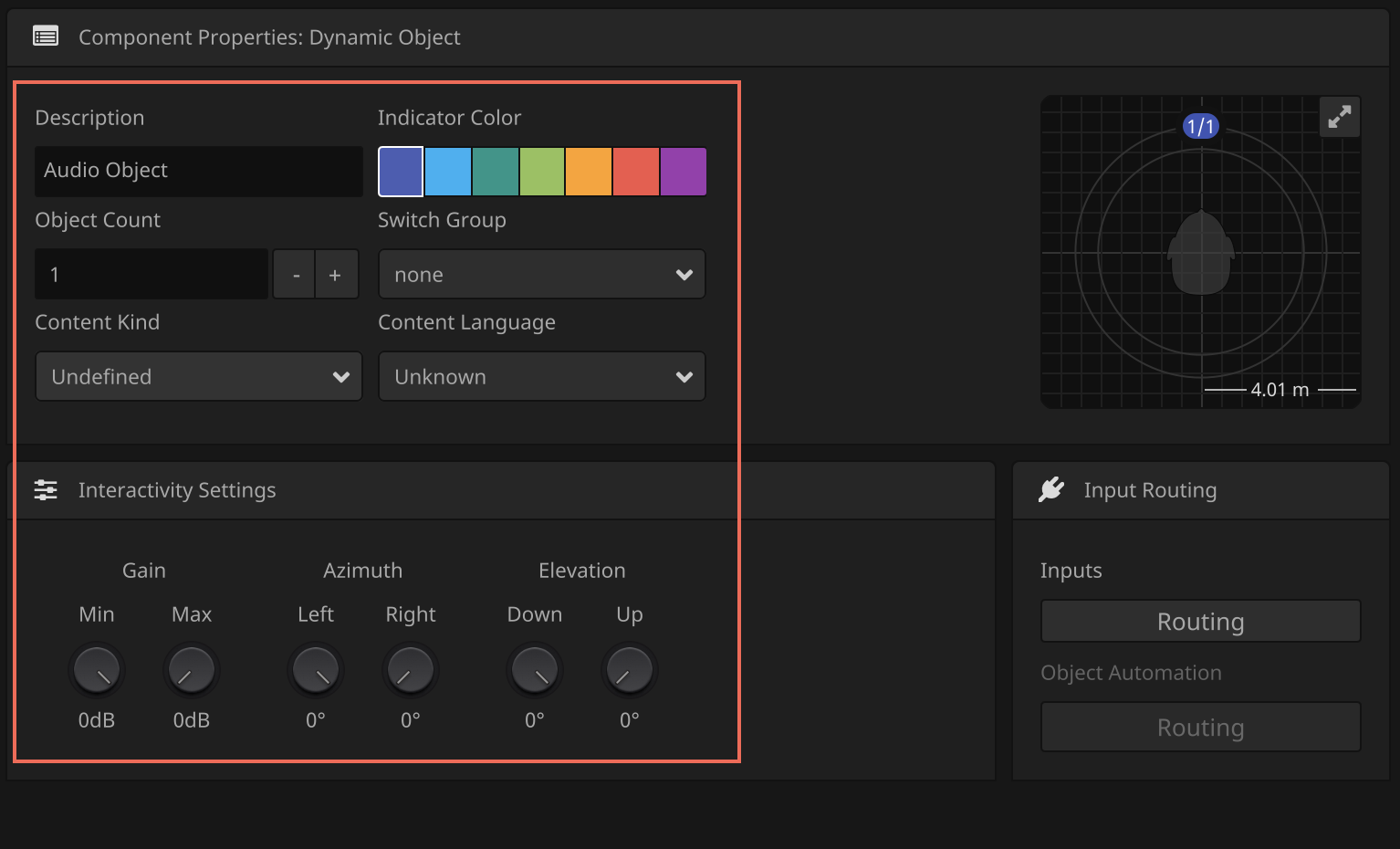

Audio objects are individual sound elements that are arranged both horizontally and vertically using position-related metadata. On the playback side, they can be flexibly rendered to the appropriate playback system (see below).

What do MPEG-H Audio and “Dolby Atmos” have in common?

MPEG-H Audio and “Dolby Atmos” are object-based systems. Strictly speaking, they are hybrid formats: they support not only audio objects but also channels. In the case of Dolby, for example, this means a channel-based bed (7.1.2) that can be extended with objects.

Object-based audio can be rendered flexibly to different playback systems (speakers and headphones) by transferring audio and metadata.

So it doesn’t matter whether you have a large number of speakers, a soundbar or just very simple headphones: the production is played back in the best possible way. There is no longer any need for elaborate tests of one’s own playback setup; instead, one can assume that the playback device will always deliver the best possible listening experience for the given situation.

What is MPEG-H Audio?

MPEG-H Audio is based on the MPEG-H 3D Audio standard from ISO/IEC MPEG. You may have heard the name before: MPEG, the Moving Picture Experts Group, is the international standardization group responsible for many of the world’s dominant media standards, for example MP3, AAC, MPEG-2, MPEG-4, AVC/H.264 and HEVC/H.265.

The MPEG-H Audio system is included in the ATSC, DVB, TTA (Korean TV) and SBTVD (Brazilian TV) television standards and is used in the world’s first terrestrial UHD TV service in South Korea.

In Brazil, it is already being used to enhance terrestrial HD TV services with personalized and immersive sound. The first broadcast of TV programming with MPEG-H Audio over ISDB-Tb (SBTVD TV 2.5) took place in September 2019, and MPEG-H Audio has been used in regular broadcasting since November 2021.

MPEG-H Audio has also been selected as the only mandatory audio system for Brazil’s next-generation TV 3.0 transmission service, which is expected to launch in 2024, and for a new transmission standard to be introduced in China. But more on that later.

MPEG-H Audio is thus a next-generation audio codec and supports NGA features such as immersive audio (channel-, object-, and scene-based transmission and combinations thereof). It also enables interactivity and personalization, as well as flexible and universal delivery of produced content.

The MPEG-H Audio standard was developed, among other things, for integration into streaming applications. For example, it underlies Sony’s 360 Reality Audio music format. Thus, MPEG-H Audio is already being used extensively.

And that brings us back to the clash of the titans: a separate overview shows which music platforms use 360 Reality Audio and which use Dolby Atmos Music.

Audio metadata

“Audio” in MPEG-H Audio is described as a combination of audio components and associated metadata. A distinction is made between static and dynamic metadata:

Static metadata remains constant and describes, for example, the type of audio content. Dynamic metadata, on the other hand, changes over time (e.g., position information). The term metadata sounds a bit abstract, but you can think of it as follows:

In channel-based formats such as 7.1 surround, each channel is precisely defined by the speaker it drives. With audio objects, however, the available speaker setup is only known at playback time. The mono audio signal of an object therefore needs additional information so that the decoder knows where the sound actually has to go. This information is carried in the metadata.
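A minimal Python sketch can make this concrete. It models an audio object whose static metadata (content type, language) stays fixed and whose dynamic metadata is a list of timed position keyframes. All class, field and function names are hypothetical and do not reflect the actual MPEG-H bitstream syntax; linear interpolation between keyframes is likewise an assumption made for illustration.

```python
from bisect import bisect_right
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class PositionKeyframe:
    """Dynamic metadata: where the object should be at a given time (hypothetical format)."""
    time_s: float
    azimuth_deg: float    # 0 = front, positive = to the left (assumed convention)
    elevation_deg: float  # 0 = ear height, positive = above


@dataclass
class AudioObject:
    """Metadata for one audio object (the audio signal itself is omitted here)."""
    name: str
    content_type: str                 # static metadata, constant for the whole programme
    language: Optional[str] = None    # static metadata
    keyframes: List[PositionKeyframe] = field(default_factory=list)  # dynamic, sorted by time

    def position_at(self, t: float) -> Tuple[float, float]:
        """Return (azimuth, elevation) at time t, interpolating linearly between keyframes.

        Azimuth wrap-around at +/-180 degrees is ignored for simplicity.
        """
        if not self.keyframes:
            raise ValueError(f"{self.name} has no position metadata")
        times = [k.time_s for k in self.keyframes]
        i = bisect_right(times, t)
        if i == 0:                      # before the first keyframe: hold it
            k = self.keyframes[0]
            return k.azimuth_deg, k.elevation_deg
        if i == len(self.keyframes):    # at or after the last keyframe: hold it
            k = self.keyframes[-1]
            return k.azimuth_deg, k.elevation_deg
        a, b = self.keyframes[i - 1], self.keyframes[i]
        w = (t - a.time_s) / (b.time_s - a.time_s)
        return (a.azimuth_deg + w * (b.azimuth_deg - a.azimuth_deg),
                a.elevation_deg + w * (b.elevation_deg - a.elevation_deg))


# A helicopter moving from front-left to front-right while rising
heli = AudioObject("helicopter", content_type="effect",
                   keyframes=[PositionKeyframe(0.0, 30.0, 0.0),
                              PositionKeyframe(4.0, -30.0, 45.0)])
print(heli.position_at(2.0))  # (0.0, 22.5)
```

The renderer only needs to ask “where is this object now?”; which speakers then reproduce it is decided on the playback side.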

MPEG-H Audio Profiles and Levels

The MPEG-H Audio bitstream supports up to 128 channels or objects, which can be rendered simultaneously to up to 64 speakers.

Since, depending on the application, it is not practical for technical reasons to transmit such a complex bitstream to commercially available devices, various MPEG-H complexity profiles and levels have been introduced. This limits the decoder complexity.

The basis for the ATSC 3.0 standard, for example, is the Low Complexity Profile Level 3, with which 32 audio elements are transmitted within a bitstream and 16 of them can be decoded simultaneously.
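The effect of such a level limit can be sketched as a simple admission check. The two numbers below are the ones quoted above for Low Complexity Profile Level 3; the helper function and the example programme (a 7.1.4 bed plus commentary and effect objects) are hypothetical, and the real level definitions may contain further constraints that are not modeled here.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class LevelLimits:
    max_in_bitstream: int   # audio elements that may be carried in one bitstream
    max_decoded: int        # audio elements that may be decoded at the same time


# Values as quoted in the text for the Low Complexity Profile, Level 3
LC_LEVEL_3 = LevelLimits(max_in_bitstream=32, max_decoded=16)


def check_stream(elements_in_stream: int, elements_decoded: int,
                 limits: LevelLimits = LC_LEVEL_3) -> List[str]:
    """Return a list of violations; an empty list means the configuration fits the level."""
    problems = []
    if elements_in_stream > limits.max_in_bitstream:
        problems.append(f"{elements_in_stream} elements in bitstream > {limits.max_in_bitstream}")
    if elements_decoded > limits.max_decoded:
        problems.append(f"{elements_decoded} elements decoded at once > {limits.max_decoded}")
    return problems


# Hypothetical programme: 7.1.4 bed (12 channels) + 6 commentary objects + 2 effect objects
in_stream = 12 + 6 + 2          # 20 elements carried in the bitstream
preset = 12 + 1 + 2             # the listener hears the bed, one commentary and both effects
print(check_stream(in_stream, preset))      # []
print(check_stream(in_stream, in_stream))   # decoding all 20 at once would exceed 16
```

This is why a broadcaster can carry many alternatives (languages, commentaries) in one stream while each receiver only decodes the subset it actually plays.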

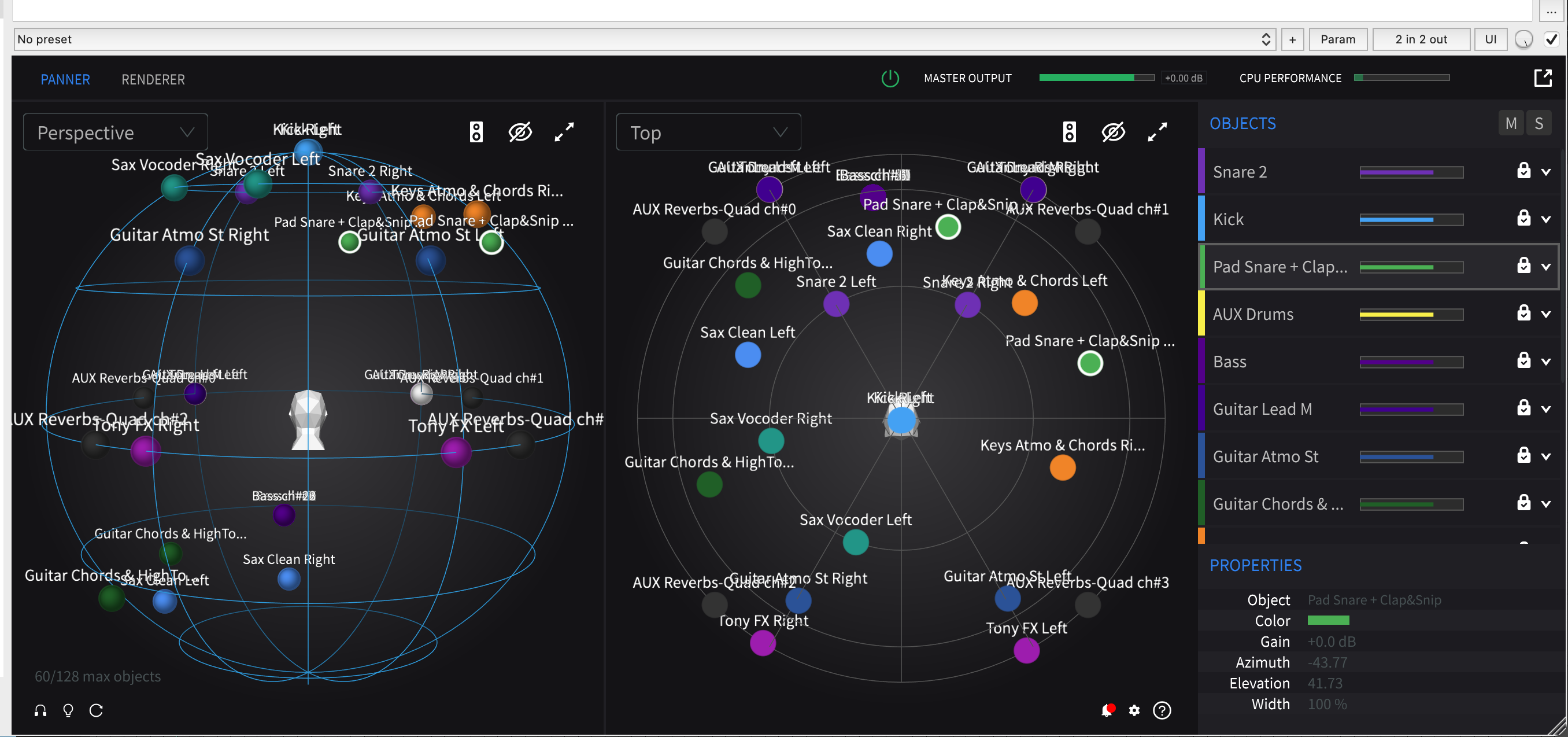

Flexible rendering

Audio objects that are transmitted with (time-variable) position metadata are rendered in the MPEG-H audio decoder using a Vector Based Amplitude Panning (VBAP) algorithm.

VBAP plays a role especially when panning objects on 3D loudspeaker layouts. Here, three speakers at a time (physical or virtual, depending on the system) reproduce an audio object, with gains chosen so that the sound appears to arrive from the intended direction at the listener’s position.
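The textbook form of VBAP fits in a few lines: the object’s direction vector is expressed as a weighted sum of the three speaker direction vectors, and the weights, normalized to constant power, become the speaker gains. The following plain-Python sketch implements that generic formulation; it is not the MPEG-H renderer itself, and the coordinate convention (x to the front, y to the left, z up) as well as the example speaker triplet are assumptions.

```python
import math


def unit_vector(azimuth_deg: float, elevation_deg: float) -> tuple:
    """Direction as a unit vector: x = front, y = left, z = up (assumed convention)."""
    az, el = math.radians(azimuth_deg), math.radians(elevation_deg)
    return (math.cos(el) * math.cos(az), math.cos(el) * math.sin(az), math.sin(el))


def det3(m) -> float:
    """Determinant of a 3x3 matrix given as a list of rows."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def vbap_gains(source, speakers, eps: float = 1e-9) -> list:
    """Gains for a speaker triplet so that the phantom source points toward `source`.

    source:   (azimuth, elevation) in degrees
    speakers: three (azimuth, elevation) pairs in degrees
    Solves p = g1*l1 + g2*l2 + g3*l3 with Cramer's rule, then normalizes so that
    g1^2 + g2^2 + g3^2 = 1 (constant total power).
    """
    p = unit_vector(*source)
    ls = [unit_vector(*s) for s in speakers]
    m = [[ls[col][row] for col in range(3)] for row in range(3)]  # speaker vectors as columns
    d = det3(m)
    if abs(d) < 1e-12:
        raise ValueError("degenerate speaker triplet")
    gains = []
    for col in range(3):
        mc = [row[:] for row in m]
        for row in range(3):
            mc[row][col] = p[row]           # replace column `col` by the source vector
        gains.append(det3(mc) / d)
    if min(gains) < -eps:
        raise ValueError("source direction lies outside this speaker triplet")
    gains = [max(g, 0.0) for g in gains]   # clip tiny negative rounding errors
    norm = math.sqrt(sum(g * g for g in gains))
    return [g / norm for g in gains]


triplet = [(30.0, 0.0), (-30.0, 0.0), (0.0, 45.0)]   # front-left, front-right, top-front
print(vbap_gains((0.0, 20.0), triplet))  # equal front-left/right gains; the top speaker adds height
```

A negative gain means the direction lies outside the triplet, so a full renderer first searches the layout for the triplet that encloses the source and only then computes gains.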

This allows the same audio content to be played back on a wide variety of playback systems. For this purpose, extensive Dynamic Range Control (DRC) and a format converter have been implemented, which adapt the MPEG-H Audio bitstream to the respective playback system.

Thanks to this concept, the audio is produced for the largest playback setup, and the adaptation to smaller configurations is done by a downmix algorithm in the renderer of the playback device. The downmix factors can be flexibly defined during production. With conventional channel-based workflows, by contrast, you have to create new audio files and mixes to go from a 5.1 mix to stereo, for example.

This step is now eliminated and you have only one file that works optimally for all playback systems.
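At its core, a 5.1-to-stereo downmix is a weighted sum of channels. The sketch below uses the ITU-R BS.775 default of −3 dB (a factor of 1/√2) for the centre and surround channels and drops the LFE; the point made above, that the downmix factors can be defined during production, is modeled here simply as the `factors` parameter. Function and key names are hypothetical, and clipping protection is left out.

```python
import math

# Default factors in the style of ITU-R BS.775: centre and surrounds at -3 dB, LFE dropped.
BS775_FACTORS = {"center": 1 / math.sqrt(2), "surround": 1 / math.sqrt(2), "lfe": 0.0}


def downmix_51_to_stereo(frame: dict, factors: dict = BS775_FACTORS) -> tuple:
    """Downmix one 5.1 sample frame (keys L, R, C, LFE, Ls, Rs) to (left, right)."""
    shared = factors["center"] * frame["C"] + factors["lfe"] * frame["LFE"]
    left = frame["L"] + shared + factors["surround"] * frame["Ls"]
    right = frame["R"] + shared + factors["surround"] * frame["Rs"]
    return left, right


dialogue_only = {"L": 0.0, "R": 0.0, "C": 1.0, "LFE": 0.0, "Ls": 0.0, "Rs": 0.0}
print(downmix_51_to_stereo(dialogue_only))  # centre appears 3 dB down in both channels

# A production could instead ship its own factors, e.g. to keep dialogue louder:
custom = {"center": 1.0, "surround": 0.5, "lfe": 0.0}
print(downmix_51_to_stereo(dialogue_only, custom))  # (1.0, 1.0)
```

Because the factors travel with the content instead of being baked into a separate stereo file, the same master serves both layouts.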

Each MPEG-H Audio decoder also includes a binaural renderer that enables immersive playback of channel-, object- and scene-based mixes over headphones. So not only are you free to choose your speaker arrangement, you can also choose your favorite headphones, whether earbuds, over-ear or otherwise.

Loudness

DRC adjusts the overall level to the device-dependent target loudness, based on the built-in loudness measurement of each element of the audio scene. For mobile devices, for example, this target loudness is -5 to -12 LUFS.

In addition, the MPEG-H Audio system automatically normalizes loudness according to common standards (e.g. EBU R 128, ITU-R BS.1770-4, ATSC A/85).
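Once the programme loudness has been measured (according to ITU-R BS.1770, which this sketch does not implement), normalization itself is simple arithmetic: the gain in dB is the target loudness minus the measured loudness. The target values below are the ones specified by EBU R 128 (−23 LUFS) and ATSC A/85 (−24 LKFS); the function names and the −16 LUFS example programme are hypothetical.

```python
# Target loudness levels defined by the respective recommendations (LKFS and LUFS are equivalent units)
TARGETS = {"EBU R 128": -23.0, "ATSC A/85": -24.0}


def normalization_gain_db(measured_lufs: float, target_lufs: float) -> float:
    """Gain (dB) that moves a programme from its measured loudness to the target."""
    return target_lufs - measured_lufs


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in dB to a linear amplitude factor."""
    return 10 ** (gain_db / 20)


measured = -16.0  # hypothetical programme, e.g. mastered loud for music streaming
for name, target in TARGETS.items():
    g = normalization_gain_db(measured, target)
    print(f"{name}: {g:+.1f} dB -> x{db_to_linear(g):.3f}")
```

A device-dependent target, as described above for DRC, would simply be passed in as `target_lufs`.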

What is “Dolby Atmos”?

As mentioned at the beginning, the term “Dolby Atmos” is an umbrella term for Dolby’s immersive sound experience that enables the production and playback of music and audiovisual content. These can be transmitted via various codecs (Dolby Digital Plus, Dolby TrueHD, AC-4).

It was introduced in 2012 as a playback system in cinemas, expanded over the years to the home theater sector, and has been available via streaming services such as Netflix since 2017. Finally, the introduction of “Dolby Atmos” Music in late 2019 marked its entry into the music streaming market.

Dolby Atmos Music, for example, is based on both the new AC-4 Immersive Stereo (AC-4 IMS) codec (for headphone playback via music streaming services like Tidal) and Dolby Digital Plus Joint Object Coding (DD+JOC) (for speaker playback via Tidal or Amazon Music HD).

However, you actually have to be very careful here, because “Dolby Atmos” is often used as a kind of quality seal. A “Dolby Atmos” file does not automatically have to be based on multi-channel audio. Some smartphones even have a “Dolby Atmos” function. This simply tries to make the stereo sound a bit better. The result is probably a matter of taste, but Dolby was able to make a big name for itself in the consumer sector with this strategy.

AC-4 codec

AC-4 is a Next Generation Audio codec from Dolby Laboratories Inc., standardized in ETSI TS 103 190 and ETSI TS 103 190-2. It is considered the successor to Dolby Digital (AC-3) and Dolby Digital Plus (E-AC-3), see below.

AC-4 was developed especially for current and future multimedia entertainment services such as streaming. The codec supports NGA features such as immersive and personalized audio. It also offers advanced loudness and DRC control for different device types and applications, dialog enhancement, program IDs, flexible playback rendering and other features.

The AC-4 codec can transmit channel-based and object-based audio content, as well as combinations of both, with high audio quality at low bit rates. On average, AC-4 provides 50% higher compression efficiency than Dolby Digital Plus, and it is not backward compatible with Dolby Digital or Dolby Digital Plus.

Substreams

AC-4 allows different substreams to be transmitted within an audio bitstream. These substreams can be, for example, different commentaries (mono), a 5.1 bed or a 7.1.4 bed. The audio renderer can combine different substreams (e.g. 5.1 bed + English commentary or 7.1.4 bed + Spanish commentary), which are defined in the so-called “Presentations”. Which of these “Presentations” is finally played back is decided in the decoder depending on the corresponding playback system.
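How a decoder might pick one of several presentations can be sketched as follows. The data model (`Substream`, `Presentation`) and the selection rule (first match the preferred language, then take the largest bed the speaker setup can reproduce directly) are hypothetical simplifications for illustration; they are not the AC-4 bitstream syntax, and a real decoder could, for instance, render a larger bed down to a smaller setup instead of skipping it.

```python
from dataclasses import dataclass
from typing import Tuple

# Number of speakers needed to reproduce each layout directly
CHANNELS = {"mono": 1, "2.0": 2, "5.1": 6, "7.1.4": 12}


@dataclass(frozen=True)
class Substream:
    name: str
    layout: str


@dataclass(frozen=True)
class Presentation:
    name: str
    bed: Substream
    extras: Tuple[Substream, ...]   # e.g. commentary mixed on top of the bed
    language: str


BED_51, BED_714 = Substream("bed", "5.1"), Substream("bed", "7.1.4")
COMM_EN, COMM_ES = Substream("commentary_en", "mono"), Substream("commentary_es", "mono")

PRESENTATIONS = [
    Presentation("5.1 + English", BED_51, (COMM_EN,), "en"),
    Presentation("5.1 + Spanish", BED_51, (COMM_ES,), "es"),
    Presentation("7.1.4 + English", BED_714, (COMM_EN,), "en"),
    Presentation("7.1.4 + Spanish", BED_714, (COMM_ES,), "es"),
]


def select_presentation(presentations, speaker_count: int, language: str) -> Presentation:
    """Pick a presentation the playback system can reproduce, preferring the user's language."""
    playable = [p for p in presentations if CHANNELS[p.bed.layout] <= speaker_count]
    if not playable:
        raise ValueError("no presentation fits this playback system")
    preferred = [p for p in playable if p.language == language] or playable
    return max(preferred, key=lambda p: CHANNELS[p.bed.layout])


print(select_presentation(PRESENTATIONS, 12, "es").name)  # 7.1.4 + Spanish
print(select_presentation(PRESENTATIONS, 6, "en").name)   # 5.1 + English
```

Note that the beds and commentaries are each carried only once; the presentations merely reference them, which keeps the bitstream compact.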


Loudness

In order to handle the wide range of playback devices and systems, AC-4 implements flexible Dynamic Range Control (DRC) and loudness management. Four DRC decoder modes are defined by default: home theater, flat-panel TV, portable speakers and headphones.

Loudness management in AC-4 includes real-time adaptive loudness processing. The Dolby AC-4 encoder has built-in loudness management. The encoder determines the loudness of the incoming audio signal and can adjust the loudness metadata to the correct value or use multiband processing to bring the program to the target loudness level.

Instead of the audio being processed in the encoder, this information can be written to the bitstream as DRC metadata, so that processing takes place in the end device according to the playback scenario. In practice, this makes the process non-destructive: the original audio is carried in the bitstream and remains available for future applications.

Dolby Digital Plus (DD+)

Dolby Digital Plus (DD+) is the extension of Dolby Digital and is based on the existing multi-channel standard AC-3. DD+ is mentioned here because DD+ JOC (see below) is still often the basis when “Dolby Atmos” is used.

DD+ was intended to extend AC-3 with a wider range of data rates (> 640 kbit/s) and channel formats (more channels than the maximum of 5.1 supported by AC-3). For this purpose, a flexible, AC-3-compatible coding system was developed, known as Dolby Digital Plus or Enhanced AC-3 (E-AC-3).

Dolby Digital Plus can – in addition to extended channel formats with up to 14 discrete channels – transmit conventional mono, stereo or 5.1 surround formats at about half the data rate of Dolby Digital.

Joint Object Coding (JOC)

Joint Object Coding (JOC) makes it possible to transmit object-based and immersive audio content such as “Dolby Atmos” at low bit rates, and thus to render productions with a large number of objects on the listeners’ corresponding playback systems. In this process, objects that are close to each other are grouped together in spatial object groups.

Object groups can consist of objects or a combination of original objects and bed channels, and objects can also be represented in multiple object groups.

Further, a multichannel downmix of the audio content is transmitted along with parametric information that ultimately enables the reconstruction of the audio objects from the downmix in the decoder. The parametric information includes both JOC parameters and object metadata.
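The principle of “downmix plus parameters” can be illustrated with a deliberately simplified toy model: objects are summed into a single mono downmix, and for each object the encoder transmits one least-squares coefficient that the decoder uses to re-estimate the object from the downmix. This is a generic parametric-coding sketch, not Dolby’s JOC algorithm; the real system uses a multichannel (e.g. 5.1) downmix, and parametric coders of this kind typically work per time/frequency tile rather than on whole signals. All function names are hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def encode(objects):
    """Return a mono downmix and one reconstruction coefficient per object."""
    downmix = [sum(samples) for samples in zip(*objects)]
    energy = dot(downmix, downmix)
    # Least-squares coefficient c_i minimizing ||object_i - c_i * downmix||^2
    coeffs = [dot(obj, downmix) / energy if energy > 0 else 0.0 for obj in objects]
    return downmix, coeffs


def decode(downmix, coeffs):
    """Re-estimate every object as a scaled copy of the downmix."""
    return [[c * s for s in downmix] for c in coeffs]


# Two objects that differ only in level: the toy model reconstructs them exactly
voice = [0.5, -1.0, 0.25, 0.75]
echo = [0.5 * x for x in voice]
downmix, coeffs = encode([voice, echo])
print(coeffs)               # roughly [0.667, 0.333]
print(decode(downmix, coeffs))
```

For objects that are not scaled copies of each other, a single mono downmix cannot separate them completely; the decoder output is then only the best estimate in the least-squares sense.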

An initial version of JOC was developed to provide a backward-compatible extension of the Dolby Digital Plus system, enabling the transmission of immersive “Dolby Atmos” content at bit rates such as 384 kbit/s, which are common in existing broadcast and streaming applications.

To provide direct backward compatibility with existing Dolby Digital Plus decoders, a 5.1 (or 7.1) downmix is used in the DD+ JOC system. Decoding for DD+ JOC is already available in AVRs that support “Dolby Atmos”, among others.

An enhanced version, Advanced Joint Object Coding (A-JOC), including adaptive downmix options and decorrelation units, has been implemented in the AC-4 decoder. In addition, the AC-4 A-JOC system supports all other features of the AC-4 codec.

MPEG-H Audio vs. “Dolby Atmos”

Respect to everyone who has struggled through the technical details and read this far – or perhaps already knew most of it. But now it gets really exciting: the comparison of MPEG-H Audio vs. “Dolby Atmos” follows.

To better understand the differences, let’s start with the similarities:

The comparison

Both MPEG-H Audio and AC-4 are Next Generation Audio (NGA) systems, both are object-based and support immersive audio. Neither system is lossless.

Both NGA systems support interactivity: MPEG-H Audio via “Presets” and “Dolby Atmos” (AC-4) via “Presentations”. MPEG-H Audio currently offers more interactivity features, such as the definition of interactivity ranges.

MPEG-H Audio supports – at least according to public information – “true” flexible rendering: the decoder automatically renders an MPEG-H Audio production to the respective playback layout. For “Dolby Atmos”, according to the latest information, rendering differs between DD+JOC and AC-4. “Dolby Atmos” Music, for example, uses AC-4 IMS (Immersive Stereo) for headphone playback, while a DD+JOC file is transmitted for speaker playback.

All countries that have standardized NGA so far – except South Korea and Brazil – have chosen AC-4. In addition, Dolby naturally wins the race of availability in end devices – the “Dolby Atmos” label can be found on countless hardware devices, as mentioned.

However, it should be noted that the use of the term “Dolby Atmos” often makes it unclear for consumers which codec is actually behind it – the “old” codec E-AC-3, or the new codec AC-4?

Standardization in Brazil

For a long time, a frequently asked question was “but this MPEG-H Audio, is it already available with all its features anywhere except in South Korea?”. Now there is an answer for this – yes, soon! After years of competition, MPEG-H Audio has recently won the standardization for the future TV 3.0 television standard in Brazil.

If you want to know more, here are the sources used:

- Information from the SBTVD Forum on the overall project (the forum was commissioned by the government to implement it): https://forumsbtvd.org.br/tv3_0/

- The report: https://forumsbtvd.org.br/wp-content/uploads/2021/12/SBTVD-TV_3_0-AC-Report.pdf

- More about the test procedure: https://forumsbtvd.org.br/wp-content/uploads/2021/03/SBTVD-TV_3_0-P2_TE_2021-03-15.pdf

In the report, the audio technologies are labeled with group letters: A = AC-4, B = AVSA and C = MPEG-H. The clear winner is C.

How does broadcast standardization work?

In this case, the Brazilian SBTVD Forum conducted a technical evaluation phase. In this phase, several proposed technologies were compared.

This process started in July 2020 with a request for proposals (RFP) for the next-generation digital television standard (TV 3.0). The RFP covered all components of a modern TV system and included a list of requirements that could only be met by the latest technologies in these areas.

In the field of audio coding, three Next Generation Audio (NGA) codecs were proposed: MPEG-H Audio (Fraunhofer IIS, DiBEG, Ateme, ATSC), Dolby AC-4 (Dolby, ATSC) and AVSA (DTNEL).

How is NGA tested?

The technical evaluation was performed by an independent testing laboratory contracted by the SBTVD Forum and funded by the Brazilian Ministry of Communications.

For the tests, complete and functioning production and transmission chains had to be provided for all codecs. Based on these, various pre-determined test cases were tested to determine which codec could perform which functionalities.

In these tests, the MPEG-H Audio System scored 27/27 on the documentation and 25.5/26 on the technical test cases, winning the audio coding standardization.

The other two submitted codecs (AC-4 and AVSA) scored 0/26 points in the test cases. This result is quite surprising, since it means that Dolby’s AC-4 performed very poorly in direct competition with MPEG-H Audio.

Is NGA ready for the market?

In addition to the technical evaluation of the proposed technologies, aspects such as availability on the market and licensing conditions were also considered in the selection process.

At the end of the process, the SBTVD Forum chose MPEG-H Audio as the only mandatory audio system for terrestrial broadcasting and streaming services. The TV 3.0 system is expected to launch in 2024.

Conclusion

Wow, that was a really long article – but hopefully also one full of information that sheds some light on the darkness surrounding MPEG-H Audio and “Dolby Atmos”.

As it turned out, both are object-based, immersive audio systems. While Dolby scores mainly with its high market availability, MPEG-H Audio dominates from a purely technical point of view.

The standardization in Brazil had a surprising outcome in this battle of “David against Goliath”: a resounding victory for David. The question now is whether MPEG-H Audio will continue to prevail. After all, some countries have not yet chosen a side, first and foremost Germany!

So if you want to know how the journey continues, feel free to contact me without obligation.
