Audio Immersion – Job for XR Spatial 3D immersive Sound


A text by Martin Rieger with the kind support of Ana Monte and the German Association for Film Sound Professionals (BVFT).

Immersive audio isn’t just a buzzword; it’s a transformative technology that offers an entirely new level of engagement. This technology delivers an audio experience that is enveloping and realistic, surrounding the listener with sound in a way that transports them into the environment.

To comprehend its significance, it’s crucial to delve into what immersive audio truly entails, how it functions, and the myriad possibilities it unlocks for creative minds in the realm of virtual reality.

Virtual reality sound has ushered in a paradigm shift in the realm of auditory experiences, necessitating a fresh approach from both technical and creative standpoints. Immersive audio creates a three-dimensional auditory experience, allowing listeners to perceive sound from all directions as if they were physically present in the scene.

This is achieved by leveraging human perception—our brain’s natural ability to interpret spatial sound cues—to craft realistic and convincing soundscapes.

This innovation demands immersive audio solutions that can captivate audiences in a three-dimensional soundscape, where the auditory environment dynamically responds to the viewer’s movements, with or without the aid of head tracking.

Immersive audio enhances the experience in music, film, and gaming by creating a three-dimensional sound environment.

What is XR (Extended Reality)?

Extended Reality is the umbrella term for Virtual Reality (VR), Augmented Reality (AR) and Mixed Reality (MR). Spatial Audio plays a very different role in each of these.

But let’s take things one step at a time, starting with a very important point: most sound engineers talk about VR but actually mean 360° videos.

Such productions, however, are only a very small niche within virtual reality. They have two big limitations: first, 360° videos are linear in time, like conventional movies; second, they only allow the viewing direction to be rotated around three axes: X, Y and Z.

This is known as three degrees of freedom (3DoF). The hype around such productions has already died down, and they will probably play only a minor role in the future.

What is immersive audio?

So much for the topic of XR. Now let’s move on to the hype topic of “immersive”. There is a great deal of euphoria among film sound engineers, and the subject is hotly debated at the relevant conferences.

“Immersive audio” is often used as a synonym for “3D audio”. Listeners can expect an immersive sound experience that leverages advanced audio technologies to create a realistic and engaging auditory environment.

But where and when can 3D audio actually offer added value? It is important to find out where the new technology works best and what could be promising areas of application.

The possibilities for working with 3D audio are almost unlimited. Most people first think of Dolby Atmos for immersive film or music productions.

But this is only a small part of the potential, and we want to focus on the most relevant ones for BVFT. Spatial sound plays a crucial role in immersive formats, allowing audio to be precisely positioned within a 3D environment for greater realism and immersion.

Immersive audio is important because it helps replicate the three dimensional world we live in, engaging our spatial perception and making experiences more lifelike.

There is a huge jungle of new formats, devices, plugins and distribution channels emerging right now, some of which we may not even know about yet. So it may well be worth becoming an adventurer yourself and plunging into the wilderness.

Recent innovations in immersive audio technology continue to transform the listener’s experience, pushing the boundaries of what is possible with three-dimensional sound.

Immersive audio can be experienced in various formats, including Dolby Atmos, DTS:X, and Auro-3D. Dolby Atmos and DTS:X are built around object-based audio principles, while Auro-3D is primarily channel-based with added height layers.

Original sound recording

For recording on location and in the studio, sound engineers need a good overview of the existing multi-channel microphone arrays. These are usually suitable as so-called beds.

However, channel-based formats such as 5.1, 7.1 and 7.1.2 are of secondary importance. Although they are well established in the film sound context, they do not reproduce the sound spherically, that is, evenly from all sides, as immersive audio usually requires. This matters because the sound is often rotated around the spatial axes during later playback.

Binaural audio is another key technology for capturing immersive sound, especially for headphone playback, as it simulates how humans perceive sound in three dimensions.

Sooner or later, you will stumble over the term Ambisonics here. The format has existed for several decades, but it only found its real raison d’être in combination with 360° videos.

There are already quite affordable microphones from various manufacturers that have four tetrahedrally arranged capsules. Their raw recordings are referred to as A-format and are later converted by software to B-format, which is the format used for mixing in Ambisonics.
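To make the step from A-format to B-format more tangible, here is a minimal sketch in Python. It assumes the common capsule layout front-left-up, front-right-down, back-left-down and back-right-up, and a plain sum-and-difference matrix. The function name `a_to_b_format` and the 0.5 scaling factor are illustrative assumptions; real converters additionally apply manufacturer-specific filtering and calibration, which is not shown here.

```python
from typing import List, Sequence, Tuple


def a_to_b_format(
    flu: Sequence[float],
    frd: Sequence[float],
    bld: Sequence[float],
    bru: Sequence[float],
) -> Tuple[List[float], List[float], List[float], List[float]]:
    """Convert four A-format capsule signals to first-order B-format.

    Assumed capsule order: front-left-up, front-right-down,
    back-left-down, back-right-up. Returns (W, X, Y, Z) with
    X pointing to the front, Y to the left and Z upwards.
    """
    w: List[float] = []
    x: List[float] = []
    y: List[float] = []
    z: List[float] = []
    for a, b, c, d in zip(flu, frd, bld, bru):
        w.append(0.5 * (a + b + c + d))  # omnidirectional sum
        x.append(0.5 * (a + b - c - d))  # front minus back
        y.append(0.5 * (a - b + c - d))  # left minus right
        z.append(0.5 * (a - b - c + d))  # up minus down
    return w, x, y, z
```

A sound that reaches only the two front capsules ends up in W and X, while Y and Z stay at zero. Scaling and channel-ordering conventions differ between tools, so the factor used here should be treated as a placeholder.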

The advantages and disadvantages of the format will not be discussed here, but you can learn more here.

The main point is to show that during recording it is sometimes not even clear on which device or platform the production will end up later. So an ORTF-3D array or an omni-binaural microphone can be just as good a choice.

The most important difference to classic film sound is that the boom microphone is more or less completely omitted in 360° videos. Otherwise, the sound recordist, boom included, would be visible in the VR image.

Therefore, a solid wireless system is necessary here as well. On the one hand, it is important to record a 3D bed that captures the sound as well as possible from all directions; on the other hand, it is essential to record the audio objects as isolated as possible. Accurately capturing the position of each sound source is crucial for achieving spatial realism in immersive sound.

In this case, completely different rules apply than in classic film: it suddenly matters who speaks from which direction, how the scene is resolved spatially, and how sounds are perceived depending on the recording technique.

In classic film sound, traditional stereo recording uses two channels (left and right) to create a sense of space, but this approach is limited compared to modern immersive audio techniques.

The evolution of immersive audio began with early experiments in binaural recording in the late 19th century.

Sound design & music

Many tools are now available for popular DAWs such as Pro Tools and Nuendo, but not all of them. You quickly come up against limits in the form of bus sizes or speaker configurations.

The number of speakers, and how they are placed, plays a crucial role in achieving a convincing immersive sound experience. A detour via Reaper can therefore be worthwhile: there you are virtually unrestricted and can write your own scripts if need be.

To fully appreciate immersive sound, it is important to use a compatible playback system that supports advanced audio formats and proper speaker calibration. Speaking of scripts: once you work in Unity or Unreal, there is almost no way around them, but more about that later.

In most cases, music is produced in stereo. In the immersive area, there is a dedicated head-locked stereo track for this purpose. This is an audio track that does not change, no matter if the viewing direction is changed.

The problem with this is that now this static soundtrack works against the immersive soundtrack and breaks its localization.
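The difference between the two layers can be sketched in a few lines of Python. The example assumes a first-order B-format sound field (W, X, Y, Z with X to the front, Y to the left, Z upwards) and a head yaw angle that is positive when the listener turns to the left. The function names `rotate_yaw` and `compensate_head_yaw` are hypothetical and not taken from any particular tool.

```python
import math
from typing import List, Sequence, Tuple

BFormat = Tuple[List[float], List[float], List[float], List[float]]


def rotate_yaw(
    w: Sequence[float],
    x: Sequence[float],
    y: Sequence[float],
    z: Sequence[float],
    angle_rad: float,
) -> BFormat:
    """Rotate a first-order sound field around the vertical axis.

    A positive angle moves every source counterclockwise (to the left).
    W and Z are unaffected by a rotation around the vertical axis.
    """
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    x_rot = [c * xi - s * yi for xi, yi in zip(x, y)]
    y_rot = [s * xi + c * yi for xi, yi in zip(x, y)]
    return list(w), x_rot, y_rot, list(z)


def compensate_head_yaw(sound_field: BFormat, head_yaw_rad: float) -> BFormat:
    """Counter-rotate the sound field so sources stay fixed in the scene."""
    w, x, y, z = sound_field
    return rotate_yaw(w, x, y, z, -head_yaw_rad)


# The head-locked stereo track is deliberately NOT passed through
# compensate_head_yaw: it is added after the rotation and therefore
# sounds the same regardless of the viewing direction.
```

If the listener turns the head 90° to the left, a source that was straight ahead ends up on the right in the rotated sound field, while the head-locked music stays exactly where it was. This is the mismatch described above.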

Immersive audio technologies enhance the listening experience by adding spatial depth and realism, making the sound environment more engaging. Using higher resolution audio in immersive formats provides increased detail and dynamic range, further improving the sense of realism and presence.

Therefore, it can make sense to have effects and music delivered in object-based formats. Unfortunately, the production side often chooses a classic approach with music and voice-over.

This leaves almost no room for the original sound to have an effect, since three sound layers have to harmonize with each other somehow, but lie somewhere between diegetic and non-diegetic.

With immersive sound, playing and experiencing music becomes more interactive and dynamic, allowing listeners to engage with audio in new ways.

Therefore, it is better to think simpler and not to overload the sound from the beginning. Most of the time there are visual elements that already challenge the user enough, so there is no need for epic music and a commercial-style voice-over.

Software is essential for creating immersive audio, as it allows sound designers to navigate and place sound elements in a virtual 3D space.

Many digital audio workstations (DAWs) now support immersive audio formats, making it easier for creators to produce immersive soundscapes. Additionally, many popular artists have released immersive mixes, providing fans with new ways to enjoy their music.

Linear / immersive audio mixing

Make no mistake: not only is there no format standard and a motley assortment of microphone options, there are no standards for measuring loudness either.

Here, AudioEase and the FB360 workstation offer loudness metering approaches that attempt to measure the sound field signal, at least for 360° videos. The most important thing is that the mix is not too loud, so that it does not cause problems later in the decoder when binauralizing or distributing to speakers.

That is easy to say, but in many cases it is difficult to anticipate, because you absolutely cannot rely on levels.

Also, you have to say goodbye to the illusion that with 3D immersive audio, the sound will always sound exactly as you imagine it.

For example, Facebook and YouTube use different HRTF models for binauralization, which means that one and the same mix sounds significantly different on different platforms. This affects not only the timbre but also the localization and mixing ratios with the head-locked portion.

Speaking of head-locked, this already mentioned optional stereo track is mostly used for music, but can also be used for voice-overs.

In-head localization makes it clear to the user that the voice cannot be pinpointed and therefore does not belong to anyone in the scene. Nevertheless, it can be irritating for people to hear a voice and not be able to attribute it to a person.

In a classic film, no ordinary viewer would be irritated by this. In VR, however, different rules apply because you are part of the scene yourself.

So here, too, you have to question mixing decisions that have been made for ages in moving images. Channel-based workflows in stereo or surround are taught by default, but cannot be adopted for spherical audio mixes.

In traditional surround sound, audio is distributed among multiple speakers arranged around the listener in the horizontal plane, creating a sense of directionality.

With stereo setups using only two speakers, sound appears to come from between the speakers at ear level, but this configuration is limited in creating a truly immersive experience compared to formats that use multiple speakers and height channels.

In addition, listening habits also play a big role here. By now, the presence of a clip-on microphone is almost preferred to the naturalness of a boom microphone.

In the same way, listeners first have to get used to externalization (the opposite of in-head localization), i.e. the feeling of really hearing a person from the outside with headphones.

In this case, speech sounds much more spatial, just as one knows it from reality, but not as one knows it from movies.

When mixing for immersive sound, metadata can include a simple description of the position and movement of sounds, allowing for dynamic and spatial placement that enhances realism.

So, on the contrary, here the stereo sound is actually considered false sound, which can break the immersion that you tried to build with the image.

There are very few good reasons to resort to static sound in a virtual reality sound experience, and doing so very obviously shows that the potential of the medium is far from exhausted. More thoughts on this here.

Enhanced realism in immersive sound accurately mimics real-world acoustics, including distance-based volume changes and room reflections. Immersive audio also differs from traditional audio formats by adding a vertical dimension, creating a more complete and realistic soundstage.

Interactive Game Audio Mixing

Let’s just get to the point that actually makes the XR theme so powerful. And that is something that the fan of the linear moving image will probably not like at all: namely interactivity.

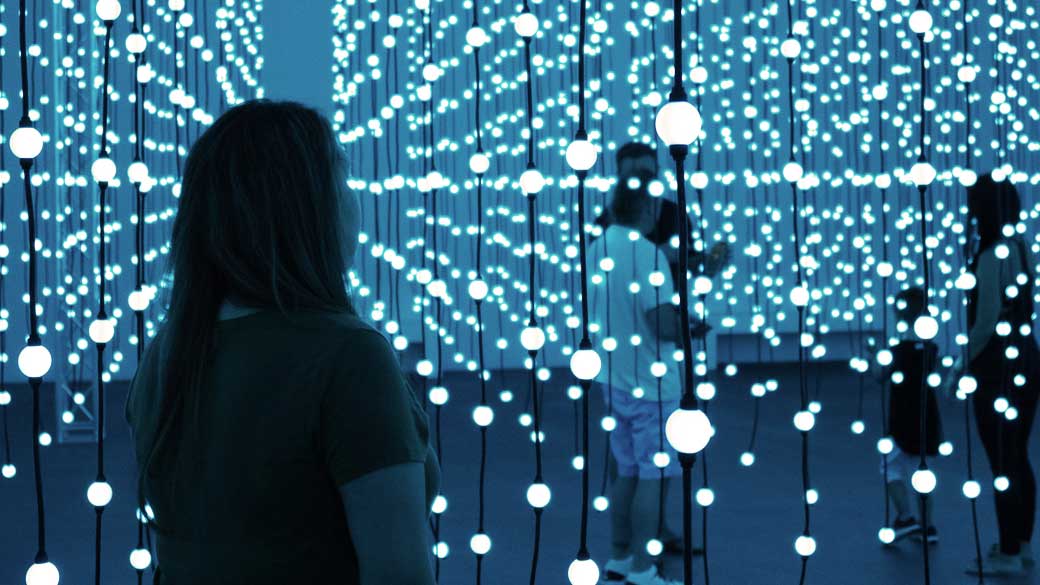

Here we quickly find ourselves in the world of 3D models and game engines. Interactive audio installations are a great example, where sound reacts to user movement or input, creating engaging environments.

But here comes the good news: there is a job description that is very close to the requirements here: Game Audio Designer. This may not have much to do with classic film sound, but the boundaries are becoming increasingly blurred.

Just because you’ve learned game audio doesn’t mean that you can only work in sound for gaming. And just because you learned film sound doesn’t mean you have to do film. Sound for XR is somewhere in that gray area.

The biggest difference from classic film sound is that instead of a longer audio file that runs constantly from start to finish, so-called audio assets are now delivered.

These are individually attached to game objects in the game engine. These can be 3D models in space or characters, or the assets can be linked to events.

In interactive audio, sound objects are used to represent individual audio elements that can be manipulated and positioned dynamically within the environment. In movie audio, we know exactly when a person walks through the door, for example, and we can add the appropriate door sound and reverb.

Unfortunately, in interactive applications, we rarely know exactly when the event will occur. Therefore, the door sound asset is linked to the game object door.

As soon as a character steps through the door, the stored sound is played. Sounds simple, but for credibility a few steps are missing – not only the character’s, but also reverberation algorithms for the two rooms separated by the door have to be defined in advance.

Besides, you don’t want to hear the same door sound every time, so you usually store a small palette of sounds right away. Or you write a script that pitches the door sound higher or lower depending on how hard the door is slammed.
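As a rough illustration of such a script, here is a minimal sketch in Python rather than in an actual game engine. The asset names, the pitch range of two semitones up or down and the function `pick_door_sound` are all assumptions made for this example; in Unity, Unreal or a middleware the same idea would be expressed with the tools available there.

```python
import random
from typing import Optional, Tuple

# Hypothetical asset names; in a real project these would be audio clips
# attached to the door game object in the engine or middleware.
DOOR_SOUNDS = ["door_close_01.wav", "door_close_02.wav", "door_close_03.wav"]

MIN_SEMITONES = -2.0     # assumed pitch offset for a gently closed door
MAX_SEMITONES = 2.0      # assumed pitch offset for a hard slam
JITTER_SEMITONES = 0.25  # small random detune so repeats sound less identical


def pick_door_sound(
    slam_force: float,
    rng: random.Random,
    last_played: Optional[str] = None,
) -> Tuple[str, float]:
    """Return (asset name, playback-rate factor) for one door event.

    slam_force is expected in the range 0.0 (gentle) to 1.0 (hard slam).
    """
    # Avoid playing the same asset twice in a row if alternatives exist.
    candidates = [s for s in DOOR_SOUNDS if s != last_played] or DOOR_SOUNDS
    sound = rng.choice(candidates)

    # Clamp the force, then map it linearly to a pitch offset in semitones.
    force = min(max(slam_force, 0.0), 1.0)
    semitones = MIN_SEMITONES + force * (MAX_SEMITONES - MIN_SEMITONES)
    semitones += rng.uniform(-JITTER_SEMITONES, JITTER_SEMITONES)

    # One semitone corresponds to a playback-rate factor of 2 ** (1 / 12).
    return sound, 2.0 ** (semitones / 12.0)
```

Calling `pick_door_sound(0.9, random.Random())` returns one of the stored sounds together with a playback rate slightly above 1.0. Whether a harder slam should sound higher or lower is a design decision; the mapping used here is only one possible choice.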

As you can see, this is not about the one perfect mix, as with linear audio productions, but about trial and error. Since the audio functions of most game engines are usually quite rudimentary, middleware comes to the rescue here.

These extend the range of functions and enable an interface to the project without having to make changes to the project itself, which pleases the programmers who usually still have to work on the same project in parallel.

Immersive audio allows sound designers to place audio objects dynamically within a 3D space, enhancing storytelling in films.

In gaming, immersive audio plays a critical role in enhancing realism by helping players locate enemies and navigate environments.

Audio Objects in Immersive Audio

At the heart of immersive audio formats like Dolby Atmos are audio objects—individual sounds or elements that can be precisely positioned and moved within a three-dimensional space.

Unlike traditional audio tracks that are fixed to specific channels, audio objects are independent and can be manipulated in real time, giving sound designers unprecedented creative control.

Audio objects can range from a single instrument in a music track to a complex sound effect in a film or game. Each object carries its own properties, such as volume, pitch, and spatial location, allowing for a rich and engaging listening experience.

By placing audio objects throughout the immersive environment, creators can simulate the way we naturally hear sound in the real world, with sources coming from all directions and distances.

This approach enables the creation of soundscapes that are not only more realistic but also more dynamic and interactive. For example, in a virtual reality experience, audio objects can respond to the listener’s movements, enhancing the sense of presence and immersion.

The use of audio objects is a defining feature of immersive audio, empowering creators to craft experiences that go far beyond the limitations of traditional stereo or surround sound.
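The idea of an audio object that carries its own properties can be sketched as a small data structure. The following Python example is a simplified illustration, not the data model of Dolby Atmos or any other format: the class `AudioObject`, the function `render_params`, the coordinate convention (x to the front, y to the left, z upwards, in metres) and the inverse-distance gain law are all assumptions.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class AudioObject:
    """A single sound with its own position and level (hypothetical model)."""
    name: str
    x: float  # metres, positive = in front of the origin
    y: float  # metres, positive = to the left
    z: float  # metres, positive = above
    gain: float = 1.0  # linear gain set by the sound designer


def render_params(
    obj: AudioObject,
    listener: Tuple[float, float, float] = (0.0, 0.0, 0.0),
    min_distance: float = 1.0,
) -> Tuple[float, float, float, float]:
    """Return (azimuth in degrees, elevation in degrees, distance, gain).

    The listener is assumed to face along the positive x axis. The gain
    follows a simple inverse-distance law and is not boosted for sources
    closer than min_distance.
    """
    dx = obj.x - listener[0]
    dy = obj.y - listener[1]
    dz = obj.z - listener[2]
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)
    azimuth = math.degrees(math.atan2(dy, dx))
    elevation = math.degrees(math.atan2(dz, math.hypot(dx, dy)))
    gain = obj.gain * min_distance / max(distance, min_distance)
    return azimuth, elevation, distance, gain
```

When the listener moves, only the `listener` argument changes and the direction and level of every object are recomputed. This is what makes object-based audio responsive in a way a fixed channel mix is not.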

Working in immersive audio

When developing an XR story with spatial audio, you should think about how to use sound to drive the story. So to get started you have to rethink audio in general.

Immersive audio opens up new possibilities for the audio experience, allowing creators to craft realistic, enveloping soundscapes that fully engage the listener.

The immersive sound experience, enabled by technologies like Dolby Atmos, DTS:X, and binaural audio, creates a three-dimensional environment that surrounds and transports the audience.

There are so many workflows that you are used to from mono or stereo sound that don’t work in XR with spatial audio, because in XR you’re not just looking in one direction, and the sound can direct your eyes.

So far, there is only a manageable amount of advanced training. However, more and more universities are recognizing the need and are already working on new concentrations in immersive audio, and labs are being expanded.

Related associations like the AES and VDT have similar offerings of workshops and webinars.

It’s worth taking a look at what content is already available and which XR experiences with spatial audio work well.

And then from there, maybe you can start developing your own stories or thinking about your own ideas. Because chances are very good that you have a vision that no one has had before in terms of sound, so that lowers the barrier to entry.

Immersive audio is rapidly transforming the way we experience sound, with applications spanning entertainment, gaming, virtual reality, and beyond.

Additionally, accessibility features in immersive sound improve experiences for visually impaired users by using directional cues to describe environments through sound.

Sound design must be rethought

As developments continue at a rapid pace, it is very difficult to predict the future. Recent innovations in immersive audio technology are transforming the listener’s experience with three-dimensional sound, showing that the field is still evolving and holds significant potential.

However, as a sound person it is definitely worthwhile to think beyond your own department. This makes communication with other creatives or technicians easier and also helps to broaden your own horizon in terms of sound.

So an education in media technology with a focus on sound can be a good foundation here. It’s no use having all the specialized knowledge about immersive audio if you can’t communicate to other people why it’s important to them.

Many audio colleagues are currently considering building speakers on the ceiling and upgrading to Dolby Atmos in the hope of attracting new clients.

But in reality, the advertising promise, or any “return on investment” at all, is unlikely to materialize. Why would a customer suddenly spend significantly more money on an audio product that probably would have worked just as well in stereo?

And if everyone suddenly offers 3D, there will be a supply on the market for which it is not at all clear whether the demand from music and film can absorb it.

For home listening, sound bars with upward-firing speakers and integrated surround sound technology now make immersive sound more accessible, emulating multi-channel formats like Dolby Atmos without the need for extensive speaker setups.

That’s the crux of it: most sound colleagues just want to stay in their studio, keep using their favorite DAW and hope that the jobs will somehow come. But that doesn’t work in the XR and immersive audio world.

Often, the additional work involved in 3D audio production is out of proportion compared to stereo. And here, too, people prefer to spend their budget on the visual part rather than on the sound. After all, the fancy smart glasses already keep everyone busy.

That’s why it’s time to turn the tables: Get out of the sound comfort zone and think for yourself what can be an exciting application in terms of XR – it’s worth it.

Therefore, above all, a different mindset is required, as you’d call it nowadays.

Dolby Atmos, DTS:X, and Sony 360 Reality Audio are leading industry standards in immersive sound technologies. Spatial audio platforms like L-ISA and d&b Soundscape deliver pinpoint sound accuracy through advanced speaker mapping.

Development and professional situation

Moviegoers are willing to pay a good price for a surround sound experience. But whether stereo or surround, at the end of the day it’s a nice-to-have feature. Don’t expect to suddenly charge more just because you’re now mixing in 3D, especially for a movie or music that mostly works well in stereo, too.

Does that mean 3D audio won’t be a big priority in the future? Of course not! I would therefore like to mention another extreme example, which we like to laugh at but which still gives us hope: 8D Audio. You can find the most detailed article here.

But the short version is: Someone came up with the idea to run a song through a spatializer and let it circle endlessly around your head. Sounds absurd, and to our trained ears it is.

And yet millions of people have been reached with 3D audio content this way, and the view counts run into nine figures. This shows how good it can be to think unconventionally for a change and do things that you might be reluctant to do.

It’s not enough to buy a 3D spatializer and think you’re doing immersive audio now. That’s why there are still a lot of people missing in the industry who really have years of experience.

You have to remember who we are mixing for and why. Do we want a 3D mix that can be shown to colleagues with a clear conscience (Dolby Atmos would be the choice here), or do we want to reach the consumer (8D Audio shows that it can be done)?

The truth is probably somewhere in between, so it’s time to harness the potential from both worlds and find the added value of immersive 3D audio.

The typical approach is to use 3D audio where surround has already worked well. It’s probably no longer a secret that over a thousand feature films have already been mixed immersively in Dolby Atmos.

This fact alone is remarkable for the sound. While the listening experience can currently be enjoyed almost exclusively in cinemas or friendly studios, the three-dimensional mix will soon also increasingly find its way into the living room at home via soundbars. Clever algorithms with virtual speakers make it possible.

Streaming platforms like Apple Music have played a significant role in popularizing immersive audio by supporting formats such as Dolby Atmos, making these experiences more accessible to consumers.

Or even simpler: in virtually every household there are headphones that make three-dimensional audio playback in the form of binaural stereo accessible to consumers.

As a listener, you get more and more the feeling that you’re not just watching a movie, but that you’re part of the action. Mixers can also enjoy the improved transparency, since the spatial distribution of the different sound layers in the room means that fewer compromises have to be made than with stereo.

Streaming services like Apple Music, Tidal, Netflix, and Amazon HD now feature immersive audio categories that captivate listeners. Looking ahead, the future of immersive audio is expected to include integration with augmented and virtual reality, further enhancing the sense of presence and realism.

Historical development and today’s situation

Artificial head stereophony has been a popular recording method for playback on headphones for decades. The vision behind it is to reproduce the acoustic environment as realistically as possible for humans.

Put simply, it can be used to trigger emotions, feelings of presence and perceptions that are deeply connected to one’s own experiences, and more immediately than mono could, for example, because a level of abstraction is removed, which makes the sound easier for our brain to process.

That’s why the keyword immersion, i.e. diving into a virtual environment, describes it quite well.

Currently, however, there is a lot of momentum in the topic again, since sound is now also becoming more accessible as a three-dimensional event for a larger audience with loudspeakers.

Early immersive audio setups often used four speakers to surround the listener, but modern systems have expanded far beyond this. Advanced formats now incorporate height channels and a dedicated height layer, with speakers positioned above the listener to create a true 3D sound environment and enhance spatial accuracy.

Furthermore, additional technologies such as head trackers, smart glasses and real-time rendering are popping up in a wide variety of areas, giving us an increasingly realistic impression of hearing the way we are used to in our natural environment.

Immersive audio systems typically require multiple speakers placed around the listener, including overhead speakers for height channels. Higher-Order Ambisonics capture and reproduce 360-degree sound fields for live events and large-scale installations.

Combining immersive audio with haptic feedback can create more engaging and realistic experiences, allowing users to feel the sound. Haptic wearables enhance immersion by allowing users to feel sound vibrations, which is beneficial for gaming and for those with hearing impairments.

Summary and conclusion

In this age of rapid technological advancement, topics like artificial intelligence, voice assistants, blockchain, smart speakers etc. might seem far removed from the realm of immersive audio.

However, these buzzwords are not to be dismissed, as they are becoming pivotal in the ever-evolving field of immersive sound especially for sound engineers. The fusion of these technologies is shaping the future of audio experiences.

While it might initially appear abstract, it’s important to recognize that sound is a versatile, cross-cutting technology that transcends the boundaries of immersive audio. It’s a tool that can seamlessly integrate into numerous domains beyond the auditory realm.

As sound engineers, it’s our prerogative to embrace these advancements, step outside our comfort zones, and explore uncharted territories in audio innovation. The future of music is not confined to three dimensions; it’s an expansive soundscape limited only by our imagination and creativity.
