Dolby Headphone: Dolby Atmos Headphones with Spatial Audio Have This Problem

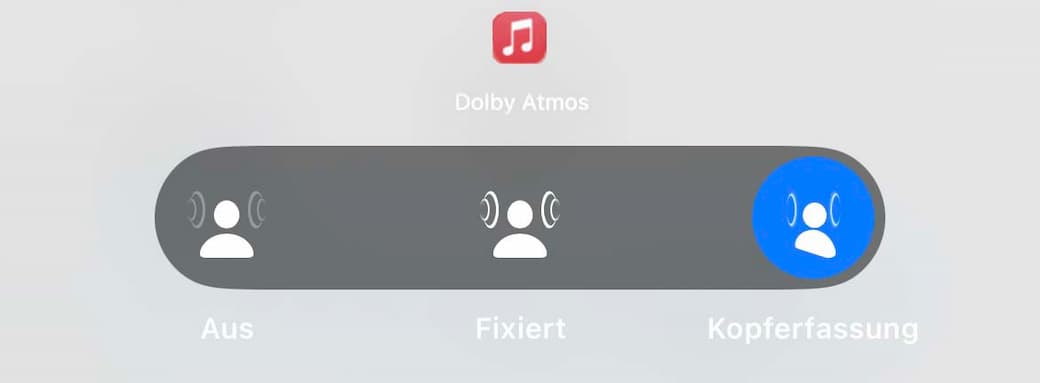

Apple incorporates this exciting 3D audio technology into all its headphones, i.e. the AirPods Pro, the AirPods Max, and the standard AirPods earbuds. I’m really happy with the implementation: it is quite clear what you are listening to (stereo or 3D) and in what way. Nevertheless, Apple is making a crucial mistake here.

In this article, we’ll take a look at 5 different ways to perceive sound with Apple’s 3D headphones. You will be introduced to headphone head tracking, including Dolby head tracking, and understand the real value of spatial audio.

-

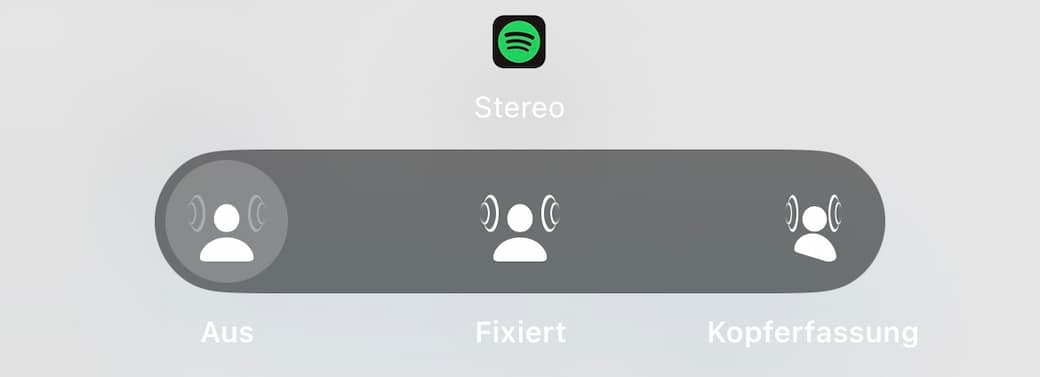

Input Stereo: 3D off, headphone headtracking

-

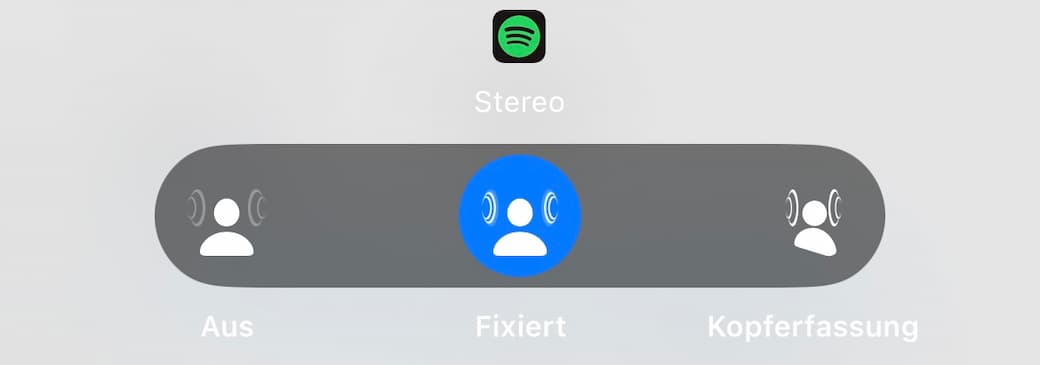

Input Stereo: 3D on, headtracking off

-

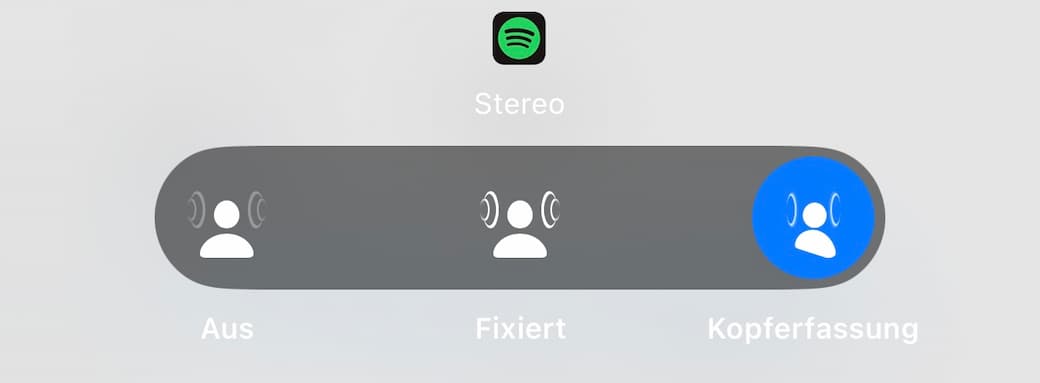

Input stereo: 3D on, headtracking on

-

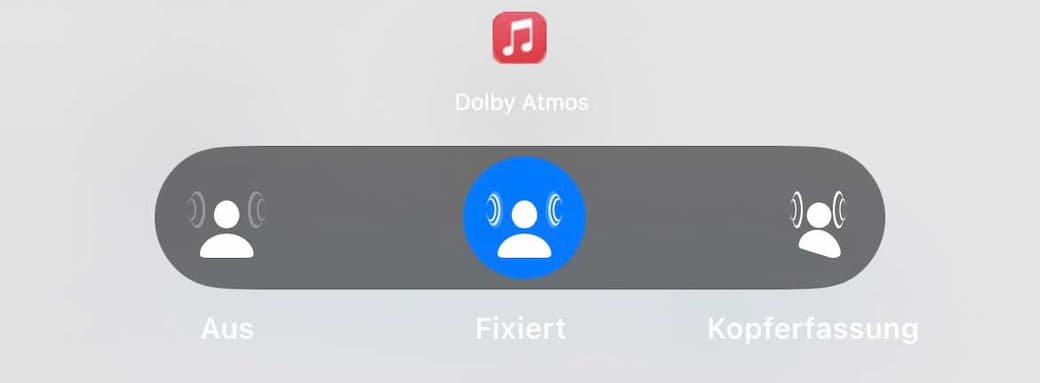

Input multichannel: head-tracking off (Dolby Atmos)

-

Input multichannel: head-tracking on (Dolby Atmos)

Stereo input, 3D off

By default, the 3D function on Apple AirPods is deactivated. Here, the user experiences conventional stereo or mono sound without any immersive soundscape.

One could object that stereo can certainly convey a sense of space. Of course, you can create a certain depth as a virtual space with reverb effects and play audio with a certain spatiality, but that doesn’t have much to do with 3D audio or virtual surround sound.

We don’t perceive the sound around us, as we would with proper spatial audio, but only in our ears.

All headphones and earbuds follow this format. This setting represents the classic audio experience that most of us have been used to for years. So it has nothing to do with AirPods products; any earphones can do that.

This effect is called in-head localization. It’s a phenomenon that I explain in more detail here.

It only occurs with headphones or earbuds, and we have become very used to it. Still, I’m going to say it: it’s actually a problem. Over the course of the article, you will see why.

Stereo input, fixed

If the stereo input is set to “fixed”, the headphone sound is given an artificial spatial extension. This means that the sound that is normally perceived directly in the head and ear now appears to be slightly further away. This is intended to compensate for the usual in-head localization and make the sound more natural.

Normal stereo or mono files are used, such as those we consume in everyday life in the form of music, video, or podcasts, but with enhancements similar to those provided by Dolby Headphone technology. The output is again stereo, only artificially enhanced. This is also known as upmixing; Apple calls it “stereo to 3D audio” or “Spatialize Stereo”.

Strictly speaking, you don’t need special 3D headphones for this feature, because the conversion can be done entirely in software on your phone or playback device. So it has nothing to do with 3D audio formats or the iPhone.

However, the sound does not adapt to you. Wherever you look, the sound experience remains the same, unlike in the next mode.

Stereo input with head tracking

Head tracking, a technology initially developed by Lake Technology, detects head movements and dynamically adapts the sound to the position of the head. This gives the listener a more precise spatial perception, because the sound responds in real time to the listener’s head movements.

More about head-tracking audio.

Now it sounds as if there are two speakers in the room in front of you and you are sitting in the sweet spot. Ideally, the two speakers and the listener form an equilateral triangle with 60° at each corner, so each speaker sits 30° to one side of straight ahead. Your brain forgets it’s wearing headphones.

It requires a pair of special headphones that can link the sound to the movement of your head. However, only mono/stereo material is used as input. This is placed in a virtual 3D scene that is rotated against your head movement, so relative to your ears the sound shifts when you turn. It creates the illusion of audio being anchored in space.
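To make the anchoring idea concrete, here is a minimal sketch in Python. It is not Apple’s or Dolby’s implementation; the function names, the sign convention (positive angles to the listener’s left), and the ±30° speaker layout are assumptions chosen for illustration. It only shows the geometry: with tracking on, the head yaw is subtracted from each virtual speaker angle, and with tracking off it is ignored.

```python
def wrap_degrees(angle):
    """Wrap an angle to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


# Hypothetical room-fixed layout: a virtual stereo pair at +/-30 degrees.
# Convention assumed here: 0 = straight ahead, positive = listener's left.
VIRTUAL_SPEAKERS = {"left": 30.0, "right": -30.0}


def head_relative_azimuths(head_yaw_deg, tracking=True):
    """Return where each virtual speaker sits relative to the nose.

    With tracking on, the scene is counter-rotated by the head yaw, so the
    speakers stay put in the room. With tracking off ("fixed"), the
    speakers are glued to the head and the yaw is ignored.
    """
    yaw = head_yaw_deg if tracking else 0.0
    return {
        name: wrap_degrees(azimuth - yaw)
        for name, azimuth in VIRTUAL_SPEAKERS.items()
    }
```

Turning the head 30° to the left with tracking on puts the left virtual speaker straight ahead (0°) and the right one at -60°. With tracking off, both stay at ±30° no matter where you look.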

I think it’s exciting to have different ways of consuming the normal stereo content that we know from our other devices and everyday lives. It’s really cool that you can switch back and forth between modes with Apple headphones.

We’re in a transitional phase here, where listeners can simply choose the mode that suits their music taste.

Multi-channel input, fixed

Switching to multi-channel input takes us into the world of Dolby Atmos and similar technologies. Here, several channels of sound are simulated in one room. If this input is set to “fixed”, the spatial audio arrangement remains constant, regardless of head movements.

This setting is ideal if the listener is not constantly moving and still wants to enjoy the immersive sound of multi-channel spatial audio.

You can think of it as a setup of virtual 5.1 speakers placed in front of and behind you to create surround sound. Usually the underlying technology is Dolby Atmos AC-4, developed by Dolby Laboratories, but during streaming video and gaming it is reduced to a kind of six-channel audio.

So you can’t work with normal stereo content here; you need multi-channel audio.

For me, this is the real entertainment value of 3D audio (compared to stereo), because now the sound doesn’t just come from the front, but from all directions, just as we know it from our natural environment.

But you don’t need special headphones like a surround headset for this, even if brands like to suggest otherwise. The rendering of multi-channel input to binaural stereo happens in software. Of course, it is better if the headphones are optimized for this sound, but in theory no special hardware is required to support it.
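The software side can be sketched roughly as follows. Real binaural renderers filter each virtual speaker with head-related transfer functions; this example swaps in a much cruder stand-in, a constant-power level difference per ear, purely to show that the fold-down from 5.1 to two ears is arithmetic that any device can do. The speaker angles, channel names, and function names are assumptions for illustration, not any vendor’s API.

```python
import math

# Assumed nominal 5.1 speaker azimuths in degrees (0 = front,
# positive = listener's left). The LFE channel has no direction.
SPEAKER_AZIMUTHS = {"L": 30.0, "R": -30.0, "C": 0.0, "Ls": 110.0, "Rs": -110.0}


def ear_gains(azimuth_deg):
    """Crude stand-in for an HRTF: a constant-power level difference only."""
    # Map the lateral position sin(az) in [-1, 1] to a pan angle in [0, pi/2].
    lateral = math.sin(math.radians(azimuth_deg))
    pan = (lateral + 1.0) * math.pi / 4.0
    return math.sin(pan), math.cos(pan)  # (left-ear gain, right-ear gain)


def downmix_frame(frame):
    """Fold one 5.1 sample frame (dict of channel name -> sample) to two ears."""
    left = right = 0.0
    for name, azimuth in SPEAKER_AZIMUTHS.items():
        gain_left, gain_right = ear_gains(azimuth)
        sample = frame.get(name, 0.0)
        left += gain_left * sample
        right += gain_right * sample
    # The LFE channel is non-directional, so feed it equally to both ears.
    lfe = frame.get("LFE", 0.0) * math.sqrt(0.5)
    return left + lfe, right + lfe
```

For example, `downmix_frame({"C": 1.0})` gives the same level in both ears, while `downmix_frame({"L": 1.0})` is louder in the left ear. Because this toy model only looks at how far to the side a speaker is, it cannot tell a speaker in front from its mirror image behind, which is one reason real renderers need proper HRTF filtering.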

The mode in which the scene is locked to the 0° axis of the head is particularly popular when you are on the move and turning your head a lot. The sound scene remains stable and does not keep rotating in the opposite direction to your line of sight.

That is unlike the next case, the ultimate discipline: dynamic head tracking.

Multi-channel input with head tracking

Adding dynamic head tracking to multi-channel audio, a feature of Dolby Headphone technology, creates a particularly immersive experience. Now the sounds can move in space and synchronize with the listener’s head movements.

This setting offers an extra dimension of realism, as the sound can be perceived not only from the front, but also from the sides and back – which is difficult with binaural audio without visual reference.

Head tracking helps with the so-called front-back confusion. Even with multi-channel 3D audio, it is difficult for our hearing to distinguish between front and back. There are physical reasons for this, and it is usually due to the fact that only a generic HRTF (head-related transfer function) is used for rendering. But even personalized 3D audio can only solve the problem approximately.

However, as soon as you turn your head slightly, your hearing immediately understands where the sounds are located in the room. This fascinates me and is also the reason why I chose to specialize in 3D audio.
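A small calculation shows why a slight head turn helps so much with telling front from back. This is a sketch under simplifying assumptions, not a model of any product: the ears are treated as two points 18 cm apart, the source is far away, and only the arrival-time difference between the ears (the interaural time difference, ITD) is considered. The constants and function name are made up for illustration.

```python
import math

EAR_DISTANCE_M = 0.18    # assumed spacing between the two ears
SPEED_OF_SOUND = 343.0   # m/s in air at room temperature


def itd_microseconds(source_az_deg, head_yaw_deg=0.0):
    """Interaural time difference for a distant source, two-point ear model.

    Angles are in degrees, 0 = straight ahead, positive = to the left.
    A positive result means the sound reaches the left ear first.
    """
    relative = math.radians(source_az_deg - head_yaw_deg)
    return EAR_DISTANCE_M * math.sin(relative) / SPEED_OF_SOUND * 1e6


# Two sources that mirror each other across the axis through the ears.
FRONT_LEFT = 30.0
BACK_LEFT = 150.0

still = (itd_microseconds(FRONT_LEFT), itd_microseconds(BACK_LEFT))
turned = (itd_microseconds(FRONT_LEFT, 10.0), itd_microseconds(BACK_LEFT, 10.0))
```

With the head still, both sources produce the same time difference, so this cue alone cannot separate them. After turning the head 10° to the left, the time difference of the front source shrinks while that of the rear source grows. The two now change in opposite directions, and that is the information the hearing picks up.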

I think it works particularly well for movies because you have a visual reference. There’s a screen in front of you, where the voices are reproduced by the center speaker. The film music usually comes from left and right, while the sound effects and background noise surround you. This effectively creates the sound of a movie theater.

What problem do we encounter with head tracking?

A key issue when using head-tracking headphones is the alignment of the audio content. You have to check whether the audio content is suitable for head tracking and has been optimized for it. If not, it doesn’t make much sense to consume such content with head-tracking technology.

On the one hand, the debate about whether and when head tracking is appropriate emphasizes the importance of context and individual preferences. On the other hand, it is also about the intention that the mixing engineer had when creating the mix.

The problem often shows up in Apple Music. Here, the singer is traditionally placed in the center, as are the snare, the bass drum, and other important elements. If you now turn your head, you can hear very clearly that the voice and instruments are coming from one direction only.

The sense of envelopment no longer seems to work so well.

That’s why I introduced the degrees-of-freedom terms 0DoF, 3DoF, and 6DoF in my 3D audio matrix. I would describe listening to music and watching movies as 0DoF. This means you shouldn’t move your ears or head; you always look straight ahead. Technically, you can activate head tracking, but it has little added value.

3DoF covers media where a change in listening direction is desired. Spatial videos, 180° videos, and above all 360° videos are based on this principle, for example. There, it makes sense to combine the 3D sound with stereo sound.

That is something Dolby Atmos can’t do with head tracking. It’s a rather complex topic, so I have explained it in detail in the context of Dolby Atmos Podcast.

Optimizing Dolby Atmos Headphones for the Best Experience

To get the most out of your Dolby Atmos headphones, follow these tips to optimize your listening experience:

- Calibrate Your Headphones: Start by using the Dolby Access app to calibrate your headphones. This step ensures that the audio is tailored to your specific headphones and listening environment, providing the best possible sound quality.

- Adjust the Audio Settings: Experiment with different audio settings in the Dolby Access app. You can tweak the bass, treble, and surround sound levels to find the perfect balance for your ears. This customization allows you to create a sound profile that suits your personal preferences.

- Use the Transparency Mode: The Transparency mode is a handy feature that lets you switch to hearing your surrounding environment instantly. This is particularly useful for gamers who need to communicate with their teammates or for music listeners who want to stay aware of their surroundings without removing their headphones.

- Choose the Right Content: Dolby Atmos headphones are designed to deliver an immersive audio experience. To fully appreciate this technology, select content that is optimized for Dolby Atmos, such as movies, TV shows, and video games. This ensures that you experience the full depth and richness of the sound.

- Update Your Headphones: Regularly updating your headphones’ software is crucial. These updates often include the latest features and improvements, ensuring that your headphones remain compatible with the newest Dolby Atmos content and continue to perform at their best.

- Use a High-Quality Audio Source: The quality of your audio source can significantly impact your listening experience. For the best sound, use high-quality audio sources like a Blu-ray player or a gaming console. This ensures that the audio signal is as clean and detailed as possible.

- Experiment with Different Gaming Modes: If you’re using your Dolby Atmos headphones for gaming, try out different gaming modes. Some modes may enhance the surround sound experience, while others might prioritize voice chat or music. Finding the right mode can elevate your gaming experience to new heights.

- Take Care of Your Headphones: Proper maintenance is key to ensuring that your Dolby Atmos headphones continue to deliver high-quality sound. Regularly clean the earcups and headband, and store your headphones in a protective case when not in use. This helps to preserve their condition and longevity.

By following these tips, you can optimize your Dolby Atmos headphones for the best possible listening experience. Whether you’re a gamer, music lover, or movie enthusiast, Dolby Atmos headphones can provide an immersive and engaging audio experience that will take your entertainment to the next level.

Conclusion: 3D headphones with head tracking

While most people think that there is simply stereo content and 3D content that you listen to with headphones, there are in fact different modes that determine the output. In addition to volume, the intention behind the content and how it was mixed also play a role. Unfortunately, this is not clear from Apple’s overview.

So if you want to know the answer on how to do it right, you can ask me, the leading expert in this field, free of charge.
