Apple Spatial Audio Format (ASAF) Plugins in Pro Tools


Need Help with ASAF? Find it here

Apple’s new Spatial Audio tools look polished from the outside, but anyone who has tried to produce real content with them knows the gaps immediately: unstable exports, missing headtracking, chaotic scene/object behavior, APAC errors and no clear guidance on how to deliver for Vision Pro or Quest 3.

If you want a setup that actually works instead of days of trial-and-error: Here’s where to get expert support.

Why we need ASAF instead of Dolby Atmos

I took a first look at the new Apple Spatial Audio plugins for Pro Tools. No headphones and no headset needed today. This is about what is in the box, how to get started, and which functions stand out at first sight.

ASAF dynamically adjusts the soundstage based on both the location of on-screen objects and the listener’s position.

I have a project in the pipeline that I am supposed to mix in Apple Spatial Audio. The goal there is to create immersive tracks that make full use of the format’s capabilities.

ASAF is built to expand on Dolby Atmos and rival emerging systems like Eclipsa. Apple continues to support Dolby Atmos, suggesting both systems will coexist.

That project is where I will dive deeper with real material and explore how well the plugins support the production of spatial audio tracks. ASAF also introduces a new codec, APAC (Apple Positional Audio Codec).

In production, audio professionals have to fine-tune their mixes for head tracking and directional audio without breaking compatibility with non-Apple platforms, including streaming delivery.

Today I explain why I am curious, how I move through the interface, and what each module does.

How to download the plug-in installer from Apple Developer

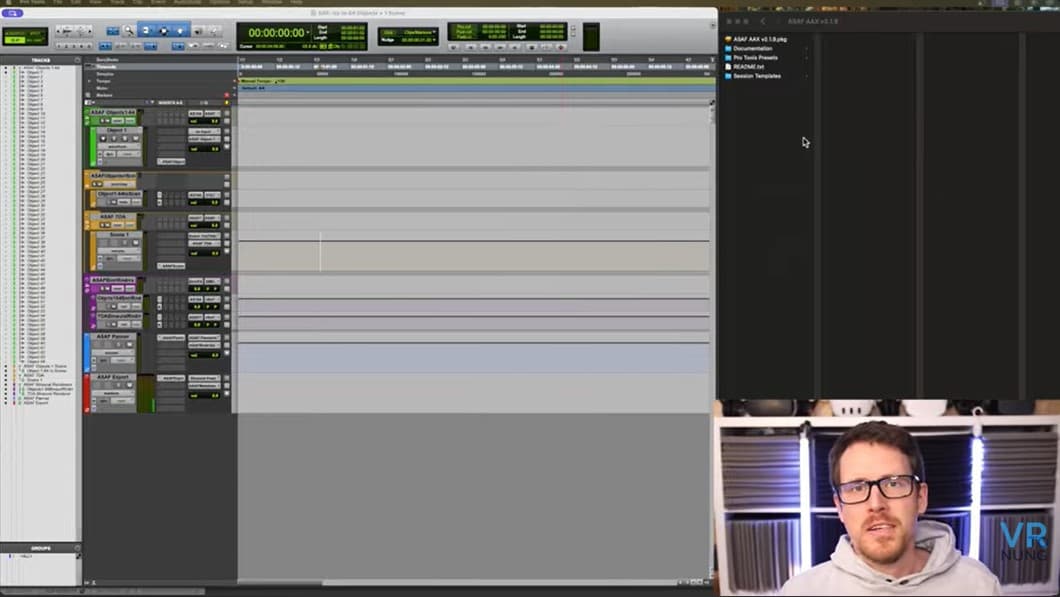

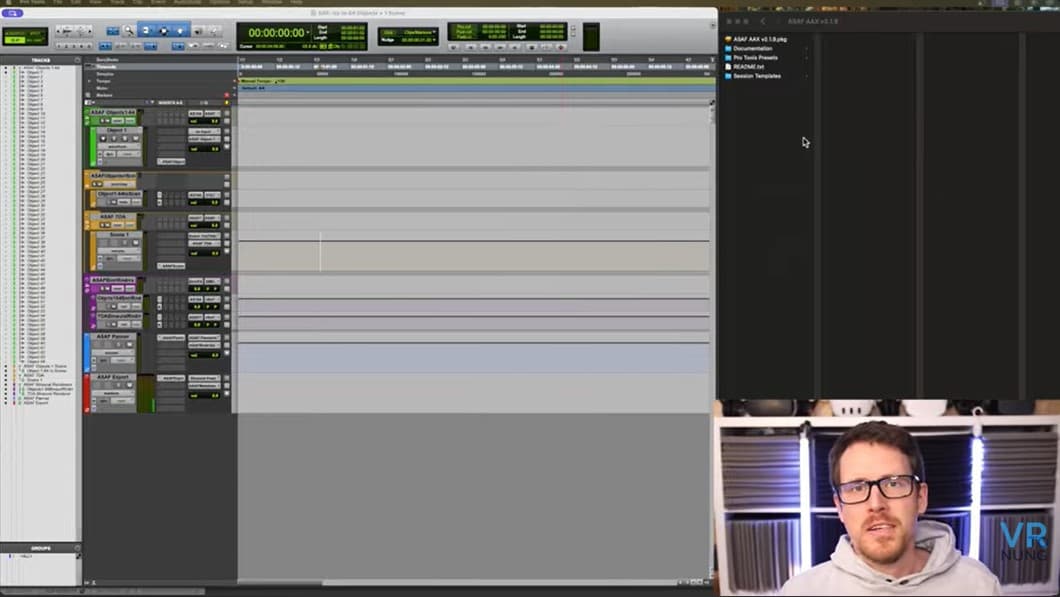

I download the plugins from Apple’s developer website and open one of the included Pro Tools session templates. The package contains both Pro Tools presets and session templates.

The template scales up to 256 objects. Setup in Pro Tools is its own thing because the routing runs through seventh-order Ambisonics (64 channels) and fixed-width buses. You cannot simply define an arbitrary bus size.

This is not Reaper, where the channel count of a track can be set freely. Logic Pro also supports immersive audio workflows and is an alternative DAW for authoring, mixing, and rendering spatial audio. The template already contains several objects that feed routing folders.
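Why the bus width is fixed follows from Ambisonics arithmetic: a full-sphere Ambisonics signal of order N needs (N + 1)² channels, so seventh order already fills 64 channels. The short sketch below just tabulates that; the function name is my own and has nothing to do with the plugin.

```python
def ambisonic_channels(order: int) -> int:
    """Channel count of a full-sphere Ambisonics signal of the given order: (N + 1)^2."""
    if order < 0:
        raise ValueError("Ambisonics order must be non-negative")
    return (order + 1) ** 2


if __name__ == "__main__":
    # First order (4 channels) up to seventh order (64 channels)
    for order in range(1, 8):
        print(f"order {order}: {ambisonic_channels(order)} channels")
```

The same numbers apply to the export presets later on: first order means 4 channels, seventh order means 64.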

I proceed step by step and begin with object one.

Audio format and compatibility

The Apple Spatial Audio Format (ASAF) is Apple’s answer to delivering immersive audio across its ecosystem. It builds on the object-based approach known from Dolby Atmos and adds features such as head tracking, environmental reverb, and dynamic object positioning.

With ASAF, creators can design high-resolution spatial audio scenes that place listeners right in the heart of the sound, whether it’s for music, video, or interactive content.

ASAF is designed for compatibility with most Apple platforms, including iOS, tvOS, and macOS, and is especially optimized for Apple Vision Pro and visionOS.

In practice, whether you mix in Pro Tools, edit in DaVinci Resolve Studio, or deliver content for Apple TV, the goal is an immersive experience that adapts to the listener’s environment and position.

The format’s integration with Apple Spatial Audio technology ensures that acoustic cues are rendered accurately in real time, making every sound element feel lifelike and externalized.

For audio professionals, ASAF offers a new spatial renderer that supports object positioning, higher-order Ambisonics, and dynamic rendering.

The format is flexible enough to handle numerous point sources, allowing for the creation of complex, high-resolution sound scenes. It also supports linear PCM audio and introduces a new metadata structure, ensuring that every detail of the mix is preserved for delivery purposes.

What the object panner does

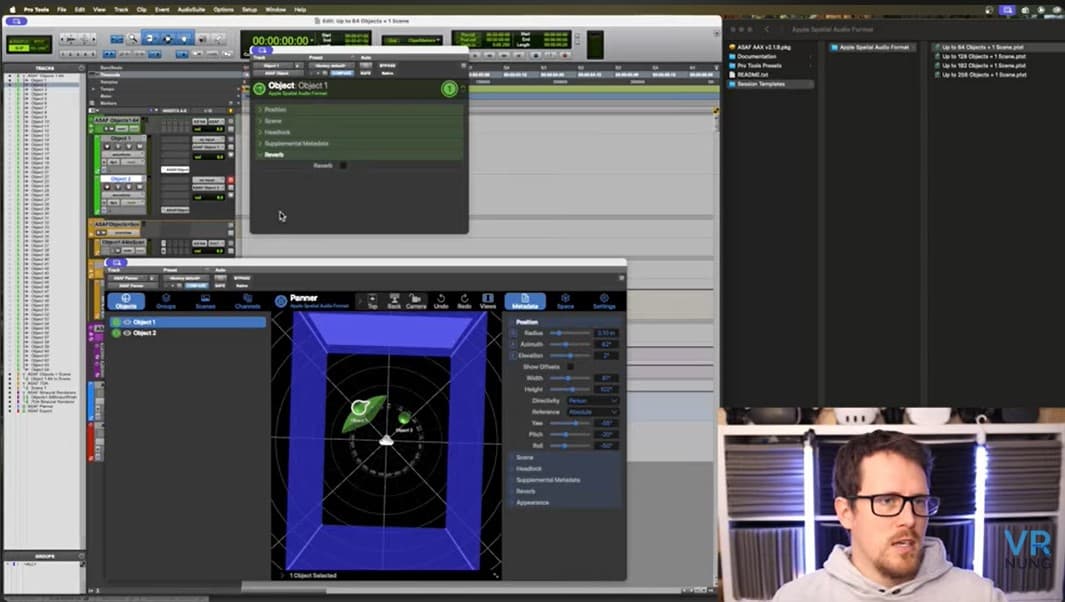

The coordinates are spherical: azimuth, elevation, and radius, where the radius behaves like distance. Width and height can also be set. The visualization reflects the changes, even if it is not always obvious what exactly it shows.
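Since the panner works in spherical coordinates and the scene renderer can switch to a Cartesian display, it helps to see how the two relate. This is a minimal sketch under an assumed convention (azimuth 0 = front, positive = left, x = front, y = left, z = up); I have not checked which convention ASAF uses internally, and the names are my own.

```python
import math
from dataclasses import dataclass


@dataclass
class SphericalPosition:
    azimuth_deg: float    # 0 = front, positive = toward the left (assumed convention)
    elevation_deg: float  # 0 = ear level, +90 = straight up
    radius: float         # distance from the listener, arbitrary units


def to_cartesian(p: SphericalPosition) -> tuple[float, float, float]:
    """Return (x, y, z) with x = front, y = left, z = up."""
    az = math.radians(p.azimuth_deg)
    el = math.radians(p.elevation_deg)
    horizontal = p.radius * math.cos(el)  # projection onto the ear-level plane
    return (horizontal * math.cos(az), horizontal * math.sin(az), p.radius * math.sin(el))


def to_spherical(x: float, y: float, z: float) -> SphericalPosition:
    """Inverse conversion back to azimuth, elevation, and radius."""
    radius = math.sqrt(x * x + y * y + z * z)
    if radius == 0.0:
        return SphericalPosition(0.0, 0.0, 0.0)
    azimuth = math.degrees(math.atan2(y, x))
    # Clamp against tiny floating-point overshoot before asin
    elevation = math.degrees(math.asin(max(-1.0, min(1.0, z / radius))))
    return SphericalPosition(azimuth, elevation, radius)
```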

The object panner gives users the ability to precisely control object position and place sound elements in three-dimensional space, enhancing the realism of the sounds.

I open the large control panel called the ASF panel. When I change azimuth on the object I can see the movement in the big panner. With multiple active objects I can move them together. Sometimes panning hangs briefly but it is not a big issue.
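Moving several objects together in azimuth is essentially adding the same offset to each object and wrapping the result back into the circle, so their spacing stays intact. A small sketch with hypothetical function names, not the plugin’s code:

```python
def wrap_degrees(angle: float) -> float:
    """Wrap an angle into the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def move_together(azimuths: list[float], delta_deg: float) -> list[float]:
    """Shift every selected object by the same azimuth offset, keeping their spacing."""
    return [wrap_degrees(a + delta_deg) for a in azimuths]
```

For example, `move_together([0.0, 170.0, -90.0], 20.0)` returns `[20.0, -170.0, -70.0]`: the object at 170° crosses the back and reappears on the other side.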

Directivity at object level

There is directivity. It recalls features from the Facebook 360 era but goes further here. You can switch between the presets person, singer, and studio monitor.

This changes how the sound radiates into the space. Higher frequencies are more directional, while bass radiates almost evenly in all directions and is hard to localize anyway. Directivity and orientation affect the perceived volume and bring the audio to life, because what you hear changes with the listener’s position in the environment.
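To illustrate the frequency dependence, here is a toy first-order model, not Apple’s person, singer, or studio monitor presets. The pattern morphs from omnidirectional at low frequencies to cardioid at high frequencies. The corner frequencies of 200 Hz and 4 kHz are arbitrary assumptions.

```python
import math

F_LOW_HZ = 200.0    # below this the toy source is omnidirectional (assumed value)
F_HIGH_HZ = 4000.0  # above this it is fully cardioid (assumed value)


def pattern_blend(freq_hz: float) -> float:
    """0.0 = omnidirectional, 0.5 = cardioid, interpolated on a log-frequency axis."""
    if freq_hz <= F_LOW_HZ:
        return 0.0
    if freq_hz >= F_HIGH_HZ:
        return 0.5
    t = math.log(freq_hz / F_LOW_HZ) / math.log(F_HIGH_HZ / F_LOW_HZ)
    return 0.5 * t


def directivity_gain(off_axis_deg: float, freq_hz: float) -> float:
    """Linear gain toward a listener who is off_axis_deg away from where the source faces."""
    a = pattern_blend(freq_hz)
    return (1.0 - a) + a * math.cos(math.radians(off_axis_deg))
```

Behind the source (180° off axis) the model keeps the bass at full level while the highs disappear, which is the effect you hear when walking around a “singer”.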

For object one I pick the person preset for testing. I can set the direction the person faces, adjust the orientation axes, and switch between absolute and origin-based modes.

In origin mode the object is oriented toward the center. If several sources should all point in the same direction, absolute mode is the more useful choice.
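The difference between the two modes can be sketched like this, assuming the same azimuth convention as for the position. The mode strings and function names are my own labels.

```python
def wrap_degrees(angle: float) -> float:
    """Wrap an angle into the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def facing_direction(mode: str, object_azimuth_deg: float, absolute_yaw_deg: float = 0.0) -> float:
    """Yaw the object faces, in the same azimuth convention as its position.

    "origin":   the object turns toward the center, i.e. opposite its own azimuth.
    "absolute": the object faces a fixed yaw, independent of where it sits.
    """
    if mode == "origin":
        return wrap_degrees(object_azimuth_deg + 180.0)
    if mode == "absolute":
        return wrap_degrees(absolute_yaw_deg)
    raise ValueError(f"unknown orientation mode: {mode!r}")
```

In origin mode, an object at 30° ends up facing -150°, back toward the listener. In absolute mode with a yaw of 90°, objects at 30°, 0°, and -30° all face the same direction, which is the case where that mode is useful.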

Headlock and binaural

Headlock is available. I see an option to externalize or not externalize headlocked audio. Usually a headlocked stereo track has no spatialization. Here you can also process a binaural signal as headlocked.
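What head-locking changes is easiest to see in the math of head tracking. A world-locked source compensates the head rotation, so it stays put in the room. A head-locked source ignores the tracker and moves with the head. This sketch assumes head yaw uses the same sign as azimuth (positive = turning left); the function names are my own.

```python
def wrap_degrees(angle: float) -> float:
    """Wrap an angle into the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def perceived_azimuth(source_azimuth_deg: float, head_yaw_deg: float, head_locked: bool) -> float:
    """Azimuth of a source relative to the listener's nose.

    World-locked: subtract the head rotation so the source stays fixed in the room.
    Head-locked: ignore head tracking, the source turns with the head.
    """
    if head_locked:
        return wrap_degrees(source_azimuth_deg)
    return wrap_degrees(source_azimuth_deg - head_yaw_deg)
```

If I turn my head 30° to the left, a world-locked source in front now sits at -30° (to my right), while a head-locked one stays at 0°.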

You can apply the same spatial effect to free 3D objects and to headlocked elements. That recalls possibilities from Dolby Atmos VR years ago. There are supplemental metadata tags such as effects, dialogue, and narration.

These tags matter during export because you can output the categories separately. Together with the head-locked and binaural options, this metadata determines how each element is rendered on playback, so that the sound adapts correctly to the listener’s movement and position.
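Exporting categories separately boils down to grouping objects by their tag. A minimal sketch with hypothetical names, assuming each object carries exactly one tag:

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class TaggedObject:
    name: str
    tag: str  # e.g. "dialogue", "narration", "effects"


def split_by_tag(objects: list[TaggedObject]) -> dict[str, list[str]]:
    """Group object names by their metadata tag, one list per exportable category."""
    stems: dict[str, list[str]] = defaultdict(list)
    for obj in objects:
        stems[obj.tag].append(obj.name)
    return dict(stems)
```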

Reverb inside the object and the group structure

Each object can enable its own reverb and mix it internally. You can set and automate the reverb level inside the object instead of using sends. The main overview lists all objects: I can group them, enable or disable groups, and switch between objects, groups, and scenes.
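Automating the internal reverb level means evaluating an automation curve over time and turning the level into a wet gain. This sketch assumes linear interpolation in dB between breakpoints; Pro Tools and the plugin may interpolate differently, and the names are hypothetical.

```python
from bisect import bisect_right


def reverb_level_db(breakpoints: list[tuple[float, float]], t: float) -> float:
    """Automated reverb level at time t, interpolated linearly in dB between breakpoints.

    breakpoints: (time_seconds, level_db) pairs sorted by time.
    """
    times = [bp[0] for bp in breakpoints]
    if t <= times[0]:
        return breakpoints[0][1]
    if t >= times[-1]:
        return breakpoints[-1][1]
    i = bisect_right(times, t)  # first breakpoint strictly after t
    (t0, l0), (t1, l1) = breakpoints[i - 1], breakpoints[i]
    return l0 + (l1 - l0) * (t - t0) / (t1 - t0)


def db_to_gain(level_db: float) -> float:
    """Convert a level in dB to a linear amplitude factor for the wet signal."""
    return 10.0 ** (level_db / 20.0)
```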

Scene and scene renderer

There is a scene workflow. You can convert objects into a scene and add room behavior. Scene is a concept in Apple Spatial Audio that combines scenes and objects. How both relate in detail is something I will study later.

The scene renderer takes between six and sixty-four objects and converts them to a scene. Camera views can be switched. Top view, back view, and other perspectives are available.

I can set field of view, rotation, elevation, and room. Materials can be selected. The settings will look familiar to users of DaVinci Resolve, where Apple Spatial Audio tools are already integrated.

You can choose between movie and music. You can switch from spherical to Cartesian display. There are modes for virtual reality, virtual reality with speakers, and virtual reality with headphones.

The scene adapts in real time to the listener’s position and environment.

This represents a new format for immersive audio experiences, with Apple’s ASAF extending beyond Dolby Atmos to enhance spatial audio on supported devices.
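Assuming the scene is an Ambisonics sound field (the scene module supports up to seventh order), turning objects into a scene means encoding each object at its direction and summing the results. Here is a first-order sketch in the AmbiX convention (ACN channel order, SN3D normalization). Apple’s renderer works at higher orders and adds room modelling, which this leaves out; the function names are my own.

```python
import math


def encode_first_order(sample: float, azimuth_deg: float, elevation_deg: float) -> list[float]:
    """Encode one mono sample at a direction into first-order Ambisonics.

    Channel order ACN (W, Y, Z, X), normalization SN3D, i.e. the AmbiX convention.
    Azimuth: 0 = front, positive = left. Elevation: positive = up.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample
    y = sample * math.sin(az) * math.cos(el)
    z = sample * math.sin(el)
    x = sample * math.cos(az) * math.cos(el)
    return [w, y, z, x]


def mix_objects(objects: list[tuple[float, float, float]]) -> list[float]:
    """Sum several (sample, azimuth, elevation) objects into one first-order scene frame."""
    scene = [0.0, 0.0, 0.0, 0.0]
    for sample, az, el in objects:
        for ch, value in enumerate(encode_first_order(sample, az, el)):
            scene[ch] += value
    return scene
```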

Head tracking and HRTF

There is a head model with tilt, turn, and center functions. I can enable head tracking and use different trackers. AirPods with head tracking can be used. There is OSC support for open trackers. HRTF selection is available. You can use the Apple system HRTF or load a personalized file.
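Open head trackers typically send their orientation as Open Sound Control messages over UDP. This sketch builds OSC 1.0 messages by hand with only the standard library. The address `/ypr`, port 9000, and the yaw/pitch/roll argument order are placeholders, not values documented for the plugin; check the documentation for what it actually listens to.

```python
import socket
import struct


def _osc_string(text: str) -> bytes:
    """OSC string: ASCII bytes, a terminating NUL, padded with NULs to a multiple of 4."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *values: float) -> bytes:
    """Build an OSC 1.0 message whose arguments are all 32-bit big-endian floats."""
    type_tags = "," + "f" * len(values)
    args = b"".join(struct.pack(">f", v) for v in values)
    return _osc_string(address) + _osc_string(type_tags) + args


def send_head_pose(yaw: float, pitch: float, roll: float,
                   host: str = "127.0.0.1", port: int = 9000,
                   address: str = "/ypr") -> None:
    """Send one head-orientation update over UDP.

    The address, port, and argument order are placeholders, not the plugin's documented values.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_message(address, yaw, pitch, roll), (host, port))
```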

Scene module, filters, and visualizer

In the scene module I find functions familiar to Ambisonics users. Seventh order Ambisonics is supported. There are metadata options and gain controls.

A spatial filter is a standout feature. It lets you boost or attenuate activity inside a defined window. I can position the filter freely and use a rectangular window. Multiple filters can be combined.
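As a mental model of the spatial filter, think of rectangular windows in azimuth and elevation, each with a gain, that add up where they overlap. This is a simplified per-direction model of my own; the plugin most likely processes the Ambisonics sound field itself, and windows crossing ±180° are not handled here.

```python
from dataclasses import dataclass


@dataclass
class RectWindow:
    """Rectangular region in azimuth/elevation with a gain change (hypothetical model)."""
    az_min: float
    az_max: float
    el_min: float
    el_max: float
    gain_db: float  # > 0 boosts, < 0 attenuates

    def contains(self, az: float, el: float) -> bool:
        return self.az_min <= az <= self.az_max and self.el_min <= el <= self.el_max


def spatial_filter_gain_db(az: float, el: float, windows: list[RectWindow]) -> float:
    """Total gain for a direction; overlapping windows add up in dB."""
    return sum((w.gain_db for w in windows if w.contains(az, el)), 0.0)
```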

There are mirror functions and switches for front and back as well as up and down. I can rotate the entire scene. There is a visualizer that can overlay the image.
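The mirror and rotation switches correspond to simple coordinate transforms. Sketched here for object positions in an assumed frame (x = front, y = left, z = up); for an Ambisonics scene the same operations are applied to the sound field instead. Names are my own.

```python
import math

Vec3 = tuple[float, float, float]  # (x = front, y = left, z = up)


def mirror_front_back(p: Vec3) -> Vec3:
    """Swap front and back by flipping the x axis."""
    x, y, z = p
    return (-x, y, z)


def mirror_up_down(p: Vec3) -> Vec3:
    """Swap up and down by flipping the z axis."""
    x, y, z = p
    return (x, y, -z)


def rotate_yaw(p: Vec3, angle_deg: float) -> Vec3:
    """Rotate around the vertical axis; positive angles turn front toward left."""
    a = math.radians(angle_deg)
    x, y, z = p
    return (x * math.cos(a) - y * math.sin(a), x * math.sin(a) + y * math.cos(a), z)
```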

Video player and overlay

The new video player opens directly. I can drag and drop a 360-degree video. The video can be rotated. That helps with rotated material or changing orientation.

The field of view can be changed. That lets you crop for 180-degree use cases. I do not see a direct option for 3D stereoscopic video. In the object overlay I can pan, set opacity, and lock axes.
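For the overlay, an object’s direction has to be mapped onto the video frame. This sketch assumes an equirectangular 360° frame with the front in the image center and the azimuth convention used above; `video_yaw_deg` stands in for rotating the video in the player. It is my own illustration, not the player’s implementation.

```python
def wrap_degrees(angle: float) -> float:
    """Wrap an angle into the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def equirect_pixel(az_deg: float, el_deg: float, width: int, height: int,
                   video_yaw_deg: float = 0.0) -> tuple[float, float]:
    """Map an object direction to pixel coordinates in an equirectangular 360° frame.

    Front (azimuth 0) lands in the image center; positive azimuth (left) moves toward
    smaller x; positive elevation moves toward the top of the image.
    """
    rel = wrap_degrees(az_deg - video_yaw_deg)
    u = (0.5 - rel / 360.0) * width
    v = (0.5 - el_deg / 180.0) * height
    return u, v


def inside_field_of_view(az_deg: float, fov_deg: float, video_yaw_deg: float = 0.0) -> bool:
    """True if the object falls inside a horizontally cropped field of view, e.g. 180°."""
    return abs(wrap_degrees(az_deg - video_yaw_deg)) <= fov_deg / 2.0
```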

Multi selection is possible. Grid and extra information can be toggled. There are image conversion settings. As soon as a video is loaded a timecode appears and playback can be synchronized with the session or offset as needed.
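Syncing the video with the session, including an offset, comes down to timecode arithmetic. This sketch assumes integer, non-drop-frame rates such as 24, 25, or 30 fps; 29.97 drop-frame needs different math. The function names are hypothetical.

```python
def timecode_to_frames(tc: str, fps: int) -> int:
    """Convert a non-drop-frame 'HH:MM:SS:FF' timecode to an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    if not (0 <= mm < 60 and 0 <= ss < 60 and 0 <= ff < fps):
        raise ValueError(f"invalid timecode {tc!r} at {fps} fps")
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


def video_frame(session_tc: str, video_start_tc: str, fps: int, offset_frames: int = 0) -> int:
    """Video frame to show at a session position, with an optional sync offset in frames."""
    return timecode_to_frames(session_tc, fps) - timecode_to_frames(video_start_tc, fps) + offset_frames
```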

Users can play immersive audio content in sync with the video, ensuring that audio tracks and visuals are seamlessly aligned for an optimal experience.

Object renderer for monitoring

The object renderer collects all objects and renders them. There is stereo for speakers and binaural for headphones. For multichannel there is no simple preset list.

You can create a custom layout and use the documentation for configuration. Common formats such as 5.1 or 7.1.4 are not offered as direct presets. The setup is possible but requires manual work.
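Before typing a custom layout into the renderer, I find it useful to write it down and check it. The table below uses one common 7.1.4 channel order with typical speaker angles; the exact angles depend on your room, and this is not the renderer’s layout file format, which I have not reproduced here.

```python
from typing import Optional

# One common 7.1.4 channel order with typical angles (illustrative, adjust to your room).
# Azimuth: 0 = front, positive = left; elevation: positive = up; LFE has no position.
Speaker = tuple[str, Optional[float], Optional[float]]
LAYOUT_7_1_4: list[Speaker] = [
    ("L", 30.0, 0.0), ("R", -30.0, 0.0), ("C", 0.0, 0.0), ("LFE", None, None),
    ("Ls", 90.0, 0.0), ("Rs", -90.0, 0.0), ("Lrs", 135.0, 0.0), ("Rrs", -135.0, 0.0),
    ("Ltf", 45.0, 45.0), ("Rtf", -45.0, 45.0), ("Ltr", 135.0, 45.0), ("Rtr", -135.0, 45.0),
]


def channel_count(layout_name: str) -> int:
    """'5.1' -> 6, '7.1.4' -> 12: ear-level + LFE + height speakers."""
    return sum(int(part) for part in layout_name.split("."))


def check_layout(name: str, speakers: list[Speaker]) -> None:
    """Raise if the speaker list does not match the ear/LFE/height counts in the name."""
    parts = [int(p) for p in name.split(".")]
    ear, lfe, height = (parts + [0, 0])[:3]
    n_lfe = sum(1 for _, az, _ in speakers if az is None)
    n_height = sum(1 for _, az, el in speakers if az is not None and el > 0)
    n_ear = len(speakers) - n_lfe - n_height
    if (n_ear, n_lfe, n_height) != (ear, lfe, height):
        raise ValueError(f"{name} expects {ear}/{lfe}/{height}, got {n_ear}/{n_lfe}/{n_height}")
```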

The object renderer is also part of the path toward encoding with the Apple Positional Audio Codec (APAC); the aim is to stay compatible with Dolby Atmos and other advanced spatial audio formats.

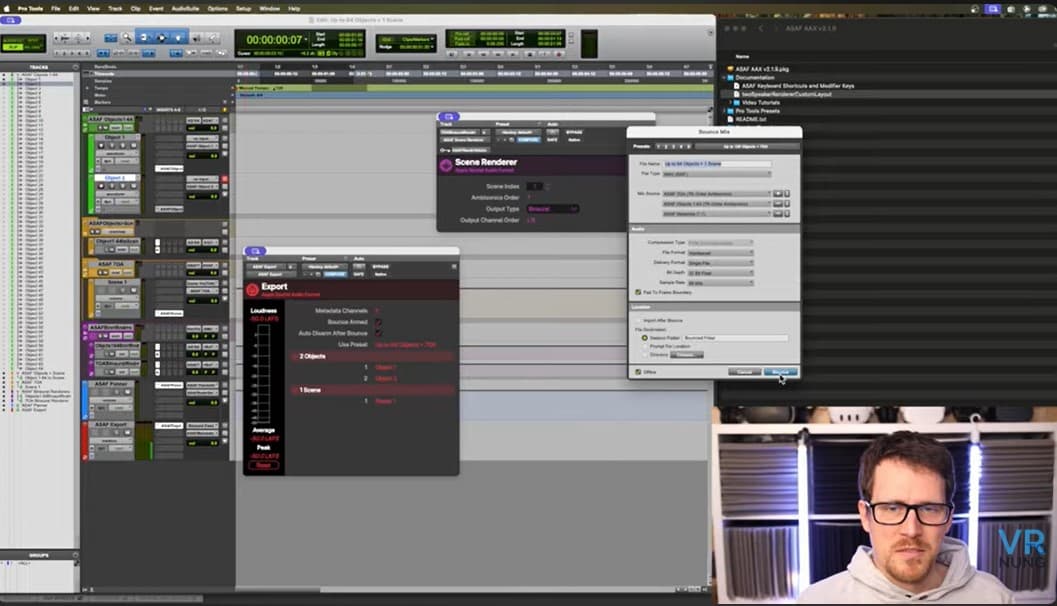

Export and merging with video

Export works via a marked time selection. I mark the objects for the bounce. The presets range from first-order Ambisonics up to seventh order and large object counts. After the bounce a conversion window opens.

In the end I get an MP4 file containing the immersive audio in the Apple Spatial Audio Format, encoded with APAC for playback on Apple devices.

That file can be merged with the video to produce a spatial video with spatial audio. I miss the simplicity of the old Facebook 360 encoder. The current Apple workflow feels less direct. For now that is cumbersome for me.

What I will do next

This overview shows what is inside the plugins. The tools look promising. Notably, Apple quietly revealed its new spatial audio format, with key contributions from Blake Gordon, one of Apple’s immersive audio engineers.

I have a concrete project where I will use them in practice. Then there will be real listening examples instead of pure clicking.

I am curious which questions come up and which functions actually save time in a mix. I welcome feedback and requests. I will share more insights once I work with real material.

Apple Spatial Audio Format – Expert Support

Many people jump into Apple Spatial Audio and immediately realize how far theory and practice drift apart. The official plugins are new, the documentation is incomplete, and the workflow seems to shift every week. Anyone trying to start a real project hits the same recurring obstacles:

Exports fail on Apple Vision Pro or Quest 3

DaVinci Resolve appears to be the only working path for the ASAF.mp4 workflow

Headtracking doesn’t respond with AirPods or OSC

Objects, scenes, and metadata behave unpredictably

APAC throws errors without explanation

ADM or Dolby Atmos workflows don’t translate cleanly

These issues drain time, energy, and deadlines. That’s where I step in: I set up reliable workflows, resolve common misunderstandings, and build a stable pipeline with you—from the session to the final immersive deliverable.

If you need support that goes beyond the basics and saves you from endless trial-and-error: Here you’ll find 1:1 support for the Apple Spatial Audio Format.
